In the first post of this series about virtual networking

More fun with veth, network namespaces, VLANs – I – open questions

I have collected some questions which had remained open in an older post series of 2017 about veths, unnamed network namespaces and virtual VLANs. In the course of the present series I will try to answer at least some of these questions.

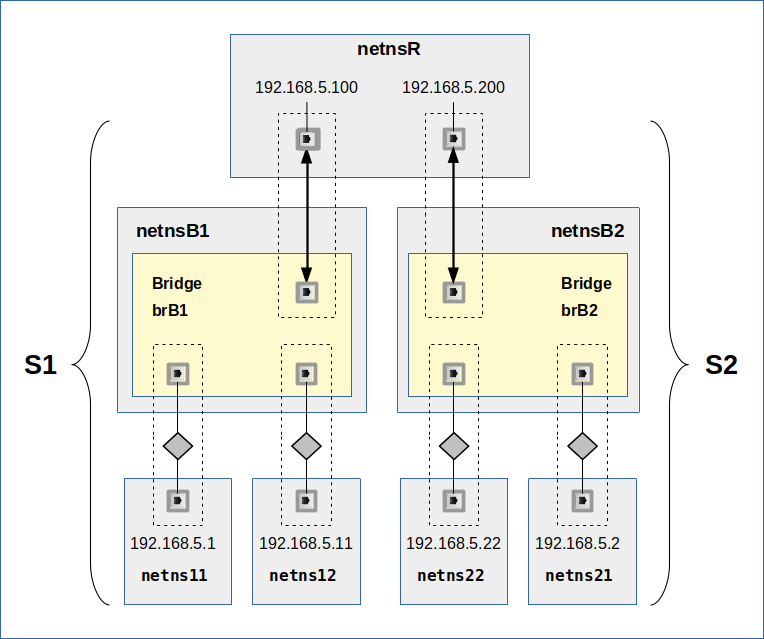

The topic of the present and the next two posts will be a special network namespace which we create with an artificial ambiguity regarding the path that ICMP and even ARP packets could take. We will study a scenario with the following basic properties:

We set up two L2-segments, each based on a Linux bridge to which we attach two separate network namespaces by veth devices. These L2-segments will be connected (by further veths) to yet another common, but not forwarding network namespace “netnsR”. The IPs of all veth end-points will be members of one and the same IP-subnet (a class C net).

No VLANs or firewalls will be set up. So, this is a very plain and seemingly simple scenario: Two otherwise separate L2-segments terminate with border NICs in a common network namespace.

Note, however, that our scenario is different from the typical situation of a router or routing namespace: One reason is that our L2-segments and their respective NICs do not belong to different logical IP networks with different IP-broadcast regions. We have just one common C-class IP-subnet and not two different ones. The other reason is that we will not enable “forwarding” in the coupling namespace “netnsR”.

In this post we will first try to find out by theoretical reasoning what pitfalls may await us and what may be required to enable a symmetric communication between the common namespace netnsR and each of the L2-segments. We will try to identify critical issues which we have to check out in detail by experiments.

One interesting aspect is that the setup basically is totally symmetric. But therefore it is also somewhat ambiguous regarding the possible position of IPs in one of the networks. Naively set routes in netnsR may break or reflect this symmetry on the IP layer. But we shall also consider ARP requests and replies on the Link layer under the conditions of our scenario.

In the next post we will verify our ideas and clarify open points by concrete experiments. As long as forwarding is disabled in the coupling namespace netnsR we do not expect any cross-segment transfer of packets. In yet another post we will use our gathered results to establish a symmetric cross-segment communication and study how we must set up routes to achieve this. All these experiments will prepare us for a later investigation of virtual VLANs.

I will use the abbreviation “netns” for network namespaces throughout this post.

Scenario SC-1: Two L2-segments coupled by a common and routing namespace

Let us first look at a graphical drawing showing our scenario:

A simple way to build such a virtual L2-segment is the following:

We set up a Linux bridge (e.g. brB1) in a dedicated network namespace (e.g. netnsB1). Via veth devices we attach two further network namespaces (netns11 and netns12) to the bridge. You may associate the latter namespaces with virtual hosts reduced to elementary networking abilities. As I have shown in my previous post series of the year 2017 we can enter such a network namespace and execute shell commands there; see here. The veth endpoints in netns11 and netns12 get IP addresses.

We build two such segments, S1 and S2, with each of the respective bridges located in its own namespace (netnsB1 and netnsB2). Then we use further veth devices to connect the two bridges (= segments) to a common namespace (netnsR). Despite setting default routes we do not enable forwarding in netnsR.
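The construction described above can be written down as a list of iproute2 commands. The following is only a sketch: it prints the commands and executes nothing. It uses named namespaces created with `ip netns add`, which is an assumption made for readability (the older series worked with unnamed namespaces). All interface names (`veth11`, `vethR1`, the `b` suffix for bridge ports) and the host addresses are hypothetical placeholders; only 192.168.5.100, 192.168.5.200 and 192.168.5.2 for netns22 appear in the text.

```python
# Sketch only: builds the iproute2 command lines for scenario SC-1 as strings.
# Interface names and most host IPs are hypothetical placeholders.

SEGMENTS = {
    "netnsB1": {
        "bridge": "brB1",
        "endpoints": [  # (namespace, interface name, IPv4 address)
            ("netns11", "veth11", "192.168.5.11"),
            ("netns12", "veth12", "192.168.5.12"),
            ("netnsR", "vethR1", "192.168.5.100"),  # border NIC of S1
        ],
    },
    "netnsB2": {
        "bridge": "brB2",
        "endpoints": [
            ("netns21", "veth21", "192.168.5.21"),
            ("netns22", "veth22", "192.168.5.2"),
            ("netnsR", "vethR2", "192.168.5.200"),  # border NIC of S2
        ],
    },
}


def in_ns(ns, cmd):
    """Prefix a command so that it runs inside the named namespace."""
    return f"ip netns exec {ns} {cmd}"


def build_commands(segments):
    """Return the ordered list of commands that would build the scenario."""
    namespaces = []
    for br_ns, seg in segments.items():
        for ns in [br_ns] + [ep[0] for ep in seg["endpoints"]]:
            if ns not in namespaces:
                namespaces.append(ns)
    cmds = [f"ip netns add {ns}" for ns in namespaces]

    for br_ns, seg in segments.items():
        br = seg["bridge"]
        cmds.append(in_ns(br_ns, f"ip link add {br} type bridge"))
        cmds.append(in_ns(br_ns, f"ip link set {br} up"))
        for ns, dev, ip in seg["endpoints"]:
            port = dev + "b"  # the veth end that becomes a bridge port
            cmds.append(f"ip link add {dev} type veth peer name {port}")
            cmds.append(f"ip link set {dev} netns {ns}")
            cmds.append(f"ip link set {port} netns {br_ns}")
            cmds.append(in_ns(br_ns, f"ip link set {port} master {br}"))
            cmds.append(in_ns(br_ns, f"ip link set {port} up"))
            # Assigning the address also creates the automatic subnet route.
            cmds.append(in_ns(ns, f"ip addr add {ip}/24 dev {dev}"))
            cmds.append(in_ns(ns, f"ip link set {dev} up"))
    return cmds


if __name__ == "__main__":
    print("\n".join(build_commands(SEGMENTS)))
```

Note that the sketch deliberately contains no command touching `ip_forward`: forwarding stays at its default in netnsR. Running the printed commands would require root privileges.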

The drawing shows that we have 7 network namespaces in total:

- netns11, netns12, netns21, netns22 represent hosts with NICs and IPs that want to communicate with other hosts.

- netnsB1 and netnsB2 host the Linux bridges.

- netnsR is a non-forwarding namespace where both segments, S1 and S2, terminate – each via a border NIC (veth-endpoint).

netnsR is the namespace which is most interesting in our scenario: Without special measures packets from netns11 will not reach netns21 or netns22. So, we have indeed realized two separated L2-segments S1 and S2 attached to a common network namespace.

The sketch makes it clear that communication within each of the segments is possible: netns11 will certainly be able to communicate with netns12. The same holds for netns21 and netns22.

But we cannot be so sure what will happen with e.g. ARP and ICMP request and/or answering packets sent from netnsR to one of the four namespaces netns11, netns12, netns21, netns22. You may guess that this might depend on route definitions. I come back to this point in a minute.

Regarding IP addresses: Outside the bridges we must assign IP addresses to the respective veth endpoints. As said: During the setup of the devices I use IP-addresses of one and the same class C network: 192.168.5.0/24. The bridges themselves do not need assigned IPs. In our scenario the bridges could at least in principle have been replaced by a hub or even by an Ethernet bus cable with taps.

Side remark: Do not forget that a Linux bridge can in principle get an IP address itself and work as a special NIC connected to the bridge ports. We do, however, not need or use this capability in our scenario.

Regarding routes: Of course we need to define routes in all of the namespaces. Otherwise the NICs with IPs would not become operative. In a first naive approach we will just rely on the routes which are automatically generated when we create the NICs. We will see that this leads to a somewhat artificial situation in netnsR. Both routes will point to the same IP-subnet 192.168.5.0/24 – but via different NICs.

Theoretical analysis of the situation of and within netnsR

Both L2-segments terminate in netnsR. The role of netnsR basically is very similar to that of a router, but with more ambiguity and uncertainty because both border NICs belong to the same IP-subnet.

Segment separation in the coupling namespace netnsR?

Let us call the NICs of netns11, netns12 the “inner NICs” of segment S1 and netns21, netns22 the “inner NICs” of S2. We instead call the NICs in netnsR with the IPs 192.168.5.100 and 192.168.5.200 “border NICs” of the respective L2-segments.

As long as we do not enable “forwarding” explicitly in netnsR we feel safe to assume that no ICMP or TCP/IP packet will be transferred from an inner NIC of one segment to an inner NIC of the other segment (for ARP packets see below). For very basic reasons a packet transfer between L2-segments behind different NIC devices in one and the same network namespace must be allowed explicitly. By default the Linux kernel will not allow for such a transfer.

But due to the fact that the border NICs reside in the same namespace we have to be a bit more precise: No packet coming from L2-segment S1 will enter the L2-segment behind (!) the border NIC of S2 – and vice versa. However, as the border NICs belong to the same namespace it might be that they can receive e.g. ICMP and ARP packets both from each other and from inner NICs of the segments. We should test this point via an experiment.

The coupling namespace netnsR as a sender of ICMP and ARP request packets? Ambiguity and dependency on routes?

Let us focus on the role of netnsR as the sender of network packets to other external namespaces in the two attached L2-segments. These could be packets to initiate a TCP/IP communication or packets for ICMP and ARP requests, but also answering packets to such requests – e.g. ARP-replies to ARP-requests which came from one of the L2-segments.

TCP/IP and ICMP requests will typically try to determine the MACs of their target IPs if they find no entries for these IPs in the ARP cache tables. So, we certainly have to look at ARP requests in netnsR.

Let us assume that we want to test the existence and activity status of a certain IP within our IP-subnet. Which of the two available NIC devices should netnsR use to send a respective ICMP-request? In particular if the ARP table is empty? We are faced with an ambiguity.

Furthermore: Which of the border NICs should netnsR use to send the Ethernet broadcasts for the initially required ARP-request?

To be able to give a positive answer even we humans would need something like a relation “target-IP vs. NIC device“. Ok, this kind of information is typically an element of a defined “route“. So what about routes in netnsR?

Again, in contrast to standard router situations the IPs in netnsR belong to one and the same IP-subnet. Therefore netnsR (and any networking application there) may find itself in a problematic position. If the IP-subnets of our two segments were different the route definitions would be clear and unique per segment. But in our case? Remember, we do not define any route explicitly during setup … We trusted instead in some automatism of the Linux system.

Whenever we assign a certain IP and a netmask to a NIC in a Linux network namespace a basic routing rule is automatically established at the same time. Thus, it may well happen that two almost identical routes to 192.168.5.0/24, but via different interfaces will appear after the setup of the veth endpoints in netnsR! A very dubious situation … Would these routes contribute to a resolution of the obvious ambiguity for ICMP and ARP requests in netnsR?

Side aspect: Two otherwise identical routes to the same IP-subnet via different interfaces?

Those readers who are familiar with the command “ip route add” will now tell me that this command declines the placement of a route in the routing tables if a route to the same group of target IPs is already defined. We get an explicit warning, too. Yes, but the kernel and even the basic command “route add” behave differently and, for example, do allow the creation of two routes to one and the same IP-subnet via different interfaces – even if all other route parameters, e.g. the distance, are identical!

Could route selection by the kernel lead to asymmetries?

We may hope that only the first one of two otherwise identical routes through different interfaces will be taken and used by the Linux kernel. But if this were true, the selected route would break the symmetry of the setup!

Then netnsR would (maybe) be able to establish full communication to NICs in one of the segments, but not to the other. However, I would not bet on such an outcome without experiments …

On the other hand: If we removed all routing rules in netnsR the situation would really become hopeless. We take this insight as a hint that routing rules in netnsR may indeed be the right means to enable a symmetric communication ability of netnsR to each of the segments. However, the routes must probably be formulated in a reasonable and specific way for the target IPs in the different segments. A reference only to the common IP-subnet will not help in our special case. We probably need IP-specific routes.

Could such individual rules for target IPs help at least to establish a symmetric communication between netnsR and NICs in either segment? We are optimistic … But a new problem lurks in the background: Why should routes affect the initial ARP requests on which the rest depends? ARP works on the Link layer … See below!
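The difference between two identical subnet routes and IP-specific routes can be illustrated with a toy route lookup. This is a deliberately simplified model, not the kernel's algorithm: it picks the longest matching prefix and, as an assumption of the model only, lets the first table entry win a tie. The device names `vethR1` and `vethR2` and the host addresses (apart from 192.168.5.2 for netns22) are hypothetical.

```python
import ipaddress


def lookup(routes, dst):
    """Toy lookup: longest prefix wins; on a tie the earlier entry is kept."""
    dst = ipaddress.ip_address(dst)
    best = None
    for prefix, dev in routes:
        net = ipaddress.ip_network(prefix)
        # Strict '>' keeps the first of two equally specific routes.
        if dst in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, dev)
    return best[1] if best else None


# The two automatically created routes in netnsR (same subnet, two devices).
NAIVE = [
    ("192.168.5.0/24", "vethR1"),
    ("192.168.5.0/24", "vethR2"),
]

# The same table, extended by one host route per inner NIC.
SPECIFIC = NAIVE + [
    ("192.168.5.11/32", "vethR1"),  # netns11 (assumed address)
    ("192.168.5.12/32", "vethR1"),  # netns12 (assumed address)
    ("192.168.5.21/32", "vethR2"),  # netns21 (assumed address)
    ("192.168.5.2/32", "vethR2"),   # netns22
]

if __name__ == "__main__":
    for table_name, table in (("naive", NAIVE), ("specific", SPECIFIC)):
        for ip in ("192.168.5.11", "192.168.5.2"):
            print(table_name, ip, "->", lookup(table, ip))
```

In this model the naive table sends packets for netns22 out of the S1 border device, i.e. the symmetry is broken, while the host routes resolve every target to the device of its own segment. Whether the kernel really behaves like the tie rule assumed here is exactly what the experiments have to show.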

Now, you may say that our scenario is an artificial one. However, we will later see that similar ambiguities as in our scenario will naturally come up in namespaces with attached VLAN-interfaces of veth devices.

Side aspect: Quadratic effort to set up IP-specific routes

I admit that most Linux users would never in their life work with separate routes to individual IPs. This would come with a lot of effort. Note that IP-specific routes would also be required in the segments’ namespaces. Therefore, the effort would grow quadratically with the number of NICs. Fortunately, we will learn in one of the next posts that we can reduce the effort to a linear one by using Proxy-ARP at netnsR.
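A rough count makes the scaling argument tangible. The counting scheme below is my own assumption of how the routes add up: netnsR needs one host route per inner NIC, and each inner namespace needs one host route per NIC of the other segment. For the Proxy-ARP case the sketch assumes that only the host routes in netnsR remain.

```python
def routes_without_proxy_arp(n1, n2):
    """Host routes needed when every namespace carries IP-specific routes.

    n1, n2: number of inner NICs in segment S1 and S2 (assumed counting scheme).
    """
    in_router = n1 + n2            # netnsR: one route per inner NIC
    in_hosts = n1 * n2 + n2 * n1   # each inner netns: one route per foreign NIC
    return in_router + in_hosts


def routes_with_proxy_arp(n1, n2):
    """Host routes needed if only netnsR has to know the individual IPs."""
    return n1 + n2


if __name__ == "__main__":
    for n in (2, 10, 50):
        print(n, routes_without_proxy_arp(n, n), routes_with_proxy_arp(n, n))
```

For our small scenario with two hosts per segment this gives 12 routes against 4; with ten hosts per segment it is already 220 against 20.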

Situation from the perspective of the segments’ network namespaces with just one NIC

Let us look at the situation of a specific standard namespace attached to one of the bridges. We take netns22 as an example. It is clear that netns22 should be able to communicate with netns21 by any protocol on any layer of the TCP/IP layer model. Including the ARP protocol.

But what about communication of netns22 with netnsR? Not so clear … From the discussion above we may assume that such a communication on the ICMP level may strongly depend on the routing rules established in netnsR. Reply packets may not reach netns22 … We would at least regard this as possible for the ICMP protocol. For a discussion of ARP requests and replies see a separate section below.

Segment separation and the border NICs

Another interesting question is:

How far does the separation of the segments in netnsR really reach regarding the segments’ border NICs?

Both border NICs (with IPs 192.168.5.100 and 192.168.5.200) are members of the same namespace. Assume that netnsR is enabled to communicate with either of the segments. Would ICMP and ARP requests e.g. from segment S2 to “192.168.5.100” not reach this border NIC of S1 – and be answered because netnsR regards this as one of its addresses?

I think this is more than probable … at least with default network parameters of the Linux kernel. But we should check out whether my opinion is true by a dedicated experiment.

We should also investigate whether and by what means we could change this behavior. The reason is made clear by the following question: Why should we deliver information about the MAC of border NICs of other separate network segments to a segment from which we do not forward TCP/IP-packets?
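One kernel parameter that is documented to influence this behavior is `arp_ignore` (under `net.ipv4.conf.<device>`). The following toy model only encodes the two modes whose documented meaning I am sure of: mode 0 (the default) answers for a local target IP configured on any interface, mode 1 answers only if the target IP is configured on the interface where the request arrived. The device names are hypothetical, and the sketch is no substitute for the experiment.

```python
def answers_arp(local_addrs, in_dev, target_ip, arp_ignore=0):
    """Toy model of the documented arp_ignore modes 0 and 1.

    local_addrs: mapping of device name -> IPv4 address in the namespace.
    in_dev:      device on which the ARP request arrived.
    """
    owners = [dev for dev, ip in local_addrs.items() if ip == target_ip]
    if not owners:
        return False               # not one of our addresses (no Proxy ARP here)
    if arp_ignore == 0:
        return True                # any local address, on any interface
    if arp_ignore == 1:
        return in_dev in owners    # only addresses of the incoming interface
    raise ValueError("only modes 0 and 1 are modelled in this sketch")


# Border NICs of netnsR; the device names are assumptions.
NETNSR_ADDRS = {"vethR1": "192.168.5.100", "vethR2": "192.168.5.200"}

if __name__ == "__main__":
    # A request from segment S2 (arriving on vethR2) for the S1 border IP.
    print(answers_arp(NETNSR_ADDRS, "vethR2", "192.168.5.100", arp_ignore=0))
    print(answers_arp(NETNSR_ADDRS, "vethR2", "192.168.5.100", arp_ignore=1))
```

In the model the default mode answers the cross-segment request for 192.168.5.100, while mode 1 suppresses it and still answers requests for the segment's own border IP.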

ARP-packet transfer and possible ambiguities on the ARP-level?

Is ARP a layer 2 protocol? The formal answer is yes. RFC 1122 tells us that ARP is part of the Link Layer. To my understanding this definition refers to two things:

- ARP operates with Ethernet broadcasts for requests and (under normal conditions) with Ethernet unicast packets for replies, i.e. it clearly uses basic layer 2 properties within a LAN-segment.

- The ARP payload within Ethernet packets is not encapsulated in IP-packets. No layer 3 packet structures are used by ARP.

In contrast e.g. to ICMP: According to RFC 792 & 1122, ICMP is one of multiple core protocols of the IP protocol suite, which comprises IP, ICMP and IGMP. ICMP can be regarded as an integrated part of IP. ICMP uses IP the same way as higher level protocols.

Having said this, I, personally, would say that ARP actually reaches a bit beyond layer 2. It certainly operates on layer 2 regarding the sending of broadcast packets and the ARP specific payload. But:

Its logic goes somewhat beyond layer 2 of the TCP/IP stack, i.e. beyond the Link layer. Despite the fact that extracting an ARP payload only requires Link layer capabilities, the receiving host or namespace must evaluate layer 3 information. It must check its own IP addresses for the IP sent with the ARP-request. If it finds that the IP in question is one of its own and if it according to all rules and settings therefore should send a reply (with an identified MAC for the IP), it has to encapsulate the result in new Ethernet packets. And afterward decide into which segment it should send the reply …

Well, you would say the last point is trivial: The ARP request passed a certain MAC at arrival which ARP just needs to remember. And we send the reply through the respective interface, i.e. through the interface where the ARP-request had arrived. Note that this MAC need not be identical to the MAC which is sent as a reply! A namespace may also send MACs for other NICs than the border NIC of the requesting segment if the IP sent in the ARP-request fits any of these NICs.

But what if a defined route clearly says something different concerning the interface by which the requestor’s IP should be reached? What if the entry in the ARP cache table contradicts route definitions? I see you thinking … We had better not trust our intuition and check this point out by an experiment.

And you see the next problem for future posts: What if a MAC/IP tuple does not unambiguously identify an interface as we expect it for veth VLAN interfaces?
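The layering argument of this section becomes visible when we assemble an ARP request by hand. The sketch below builds the raw bytes of an Ethernet broadcast frame carrying an ARP request (field layout per RFC 826). No IP header appears anywhere, yet the payload contains two IPv4 addresses which the receiver must compare with its own. The MAC address and the sender IP are hypothetical example values; nothing is sent on any network.

```python
import socket
import struct


def mac_bytes(mac):
    """Convert 'aa:bb:cc:dd:ee:ff' into 6 raw bytes."""
    return bytes.fromhex(mac.replace(":", ""))


def arp_request_frame(src_mac, src_ip, target_ip):
    """Build an Ethernet frame with an ARP request (no padding, no FCS)."""
    eth_header = struct.pack(
        "!6s6sH",
        b"\xff" * 6,         # destination: Ethernet broadcast
        mac_bytes(src_mac),  # source MAC
        0x0806,              # EtherType ARP, i.e. not IPv4 (0x0800)
    )
    arp_payload = struct.pack(
        "!HHBBH6s4s6s4s",
        1,                            # hardware type: Ethernet
        0x0800,                       # protocol type: IPv4
        6,                            # hardware address length
        4,                            # protocol address length
        1,                            # opcode 1 = request
        mac_bytes(src_mac),           # sender hardware address
        socket.inet_aton(src_ip),     # sender protocol address (layer 3 info)
        b"\x00" * 6,                  # target hardware address: unknown
        socket.inet_aton(target_ip),  # target protocol address (layer 3 info)
    )
    return eth_header + arp_payload


if __name__ == "__main__":
    frame = arp_request_frame("02:00:00:00:00:11", "192.168.5.11", "192.168.5.2")
    print(len(frame), frame.hex())
```

The frame consists of a 14 byte Ethernet header and a 28 byte ARP payload. The receiver can unpack it with Link layer means alone, but it cannot decide about a reply without looking at its IP configuration.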

ARP replies in case of forwarding?

ARP realizes its requests regarding the MAC of a given IP via Ethernet (!) broadcasts. Ethernet packets and in particular Ethernet broadcasts are limited to the L2-segment in which they originate: a namespace which does not bridge its NICs does not pass Ethernet frames from one NIC to another. From this point of view ARP packets coming e.g. from netns11 and requesting the MAC of the NIC in netns22 (192.168.5.2) will never reach their target (with disabled forwarding in netnsR). And thus remain unanswered. Despite the fact that we may already have a valid entry for the target IP (of the other segment) in the ARP cache table …

The other question is whether the refusal of an ARP-reply persists even when forwarding gets enabled. If the formal layer 2 assignment of ARP is of any practical value, we would assume the following: S1 and S2 would remain separated on the ARP level – even with a forwarding netnsR …

But could it not be meaningful to indicate to the requestor that our namespace knows where to find the requested IP?

Especially, as all the NICs in our scenario are in the same IP-subnet.

Would it not make sense to send ARP replies via some “magic” in the common namespace netnsR as soon as someone enables “forwarding”? Hmmm …

The designers of network protocols are very economical people and would provide such “magic” only if necessary or if explicitly asked for by an admin. Regarding the requested MAC: After some reasoning you may come to the conclusion that there is no real necessity to provide exactly the MAC for the IP in question – even when forwarding is enabled on a routing host or in a routing namespace. But what about the MAC of the interface by which the request entered the forwarding namespace? See below.

Anyway, we get the feeling that we must also investigate our scenario with forwarding enabled!

Forwarding between the segments and requirements for cross-segment communication

Let us assume that we want to use the common network namespace netnsR to really connect the two segments S1 and S2 – and enable TCP/IP communication between all NICs in these segments. With clear routing rules telling netnsR which packet to route through which of the available NIC devices, this should be possible for information on layers 3 and above. Why?

Well, as soon as netnsR gets the role of a real forwarding router it would extract the enclosed IP-packets from the arriving Ethernet packets, determine the defined route to the destination (by looking into a routing table) and hopefully create Ethernet packets in the correct destination segment with the help of its border device.

But this also means: On level 3 and higher the routing information is decisive and sufficient to forward packets in the right direction and through the right device within any routing namespace or on a routing host. We do not need ARP packets to cross the borders of segments.

This in turn means that it is most probable in our scenario that ARP requests from one segment regarding IPs in the other segment will remain unanswered under normal conditions.

Again a BUT: There is something like Proxy ARP. What about this piece of networking in our context?

Gateway definitions in the inner namespaces

Before I come to an answer regarding Proxy ARP: Let us assume active forwarding in netnsR. Would exact and detailed routing rules in netnsR be sufficient to establish a full communication between the segments? The answer is: Probably NOT.

Reason: On any host you must define a default gateway which is responsible for forwarding packets to other Ethernet segments which belong to different IP nets or other networks. More generally: The default gateway defines a border host or device which we need to pass to reach IPs in other networks or segments. There is no real reason to think that this would be different in our special case. There, too, each of the veth endpoints with IPs 192.168.5.100 and 192.168.5.200 must play the role of a gateway.

Therefore, the following is very plausible, if we wanted to achieve cross-segment communication in our scenario: We must define the border NIC of each of our segments as a default gateway to IPs that are located in the other segment. A respective gateway rule must be added to each of the namespaces netns11, netns12, netns21, netns22. In particular, because ARP requests for IPs in the other segment really would remain unanswered under normal conditions.

Proxy ARP

Proxy ARP, in my understanding, ensures that ARP requests are answered in situations like in our scenario, but with forwarding enabled. I.e. to answer an ARP request for an IP outside the LAN-segment where the request has its origin. See also the explanation here and here. On a typical Linux host Proxy ARP is not enabled. We must explicitly activate it for a routing and forwarding network namespace.

How would such an ARP-request packet be answered by Proxy ARP? Well, by delivering the MAC address of the router/gateway which is able to transfer TCP/IP-packets to the target IP. So, in our case Proxy ARP would help to address the routing namespace when a host/namespace in segment S1 wants to reach a namespace/host in segment S2. Thus, it appears possible that with Proxy ARP enabled we could establish a communication between the two segments without explicitly defining a default gateway in netns11, netns12, netns21, netns22. As explained before this would reduce the work load coming with IP-specific routes. We also have to check this out experimentally!
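The expected Proxy ARP behavior can be condensed into a small decision function. It is a simplified model of my reading of the mechanism, not the kernel's code: a reply is given only if forwarding and Proxy ARP are both enabled and the route to the requested IP leaves through a different device than the one on which the request arrived. The reply then carries the MAC of the incoming border device. Device names, MAC addresses and most host IPs are hypothetical.

```python
import ipaddress


def route_dev(routes, dst):
    """Toy longest-prefix lookup returning the outgoing device or None."""
    dst = ipaddress.ip_address(dst)
    best = None
    for prefix, dev in routes:
        net = ipaddress.ip_network(prefix)
        if dst in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, dev)
    return best[1] if best else None


def proxy_arp_reply(in_dev, target_ip, routes, macs, forwarding, proxy_arp):
    """Return the MAC a proxying namespace would answer with, or None."""
    if not (forwarding and proxy_arp):
        return None
    out_dev = route_dev(routes, target_ip)
    if out_dev is None or out_dev == in_dev:
        return None          # unknown target, or target in the asking segment
    return macs[in_dev]      # MAC of the border NIC facing the requestor


# Host routes in netnsR (assumed addresses except 192.168.5.2 for netns22).
ROUTES = [
    ("192.168.5.11/32", "vethR1"),
    ("192.168.5.12/32", "vethR1"),
    ("192.168.5.21/32", "vethR2"),
    ("192.168.5.2/32", "vethR2"),
]
MACS = {"vethR1": "02:00:00:00:01:00", "vethR2": "02:00:00:00:02:00"}

if __name__ == "__main__":
    # netns11 asks on segment S1 for the MAC of netns22 in segment S2.
    print(proxy_arp_reply("vethR1", "192.168.5.2", ROUTES, MACS, True, True))
    print(proxy_arp_reply("vethR1", "192.168.5.2", ROUTES, MACS, True, False))
```

In the model a requestor in S1 learns the MAC of the S1 border NIC for an IP located in S2 and would therefore send its packets to netnsR without any gateway entry of its own.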

Summary

We have defined a scenario in which two otherwise separated L2-segments get connected to a common network namespace “netnsR”. Our example is a bit unusual as all IPs in this scenario belong to one and the same IP-subnet. “Theoretical” considerations have led us to the following assumptions:

- We expect that automatically created routes for the border NICs may break the symmetry of our otherwise symmetric arrangement of coupled L2-segments.

- We expect that routing rules are required in the namespace “netnsR” to enable a symmetric communication of netnsR with the two attached segments – even without forwarding enabled. The routes must probably be specified on the detail level of each individual target host or namespace. Such rules could at least enable a symmetric communication of netnsR to NICs in either segment.

- We expect that detailed routes for individual IPs are even required to direct ARP-requests into the right segment. Note that this already would mean that there is a clear impact of routes on ARP in ambiguous interface situations! We are, however, not so sure whether routes have an impact on ARP-replies, yet …

- We would like to limit ARP-replies to border NICs as long as we do not enable forwarding. But we do not know yet how to achieve this.

- Cross-segment communication requires the explicit activation of “forwarding” in netnsR.

- We expect that ARP-requests will not cross the borders of an L2-segment and will not be forwarded. We therefore expect that ARP-requests originating in one segment for MAC addresses of IP devices in the other segment will remain unanswered (with deactivated Proxy ARP in netnsR).

- With disabled Proxy ARP we probably must define the border NIC of either segment as a default gateway for all the namespaces/hosts attached to the segment. Otherwise cross-segment communication would not work even if we enabled forwarding in netnsR.

- Proxy ARP, enabled in netnsR together with clear routes, should answer ARP requests coming from an L2-segment for an IP outside the segment with the MAC of the segment’s border device in the routing namespace. (This may make a gateway definition in the non-routing namespaces unnecessary.)

These are expectations based on reasonable assumptions, at least in my opinion. But as one of my readers said: Only experiments will tell us what really happens. The setup of our scenario and related concrete experiments are the topic of the next post in this series. See:

The attentive reader has, of course, noticed that such experiments would also deliver some first answers to questions which may have remained open in my discussion of various network scenarios in my old post series on veths and namespaces (see posts VII and VIII there).