During the last two posts of this series

More fun with veth, network namespaces, VLANs – IV – L2-segments, same IP-subnet, ARP and routes

we have studied a Linux network namespace with two attached L2-segments. All IPs were members of one and the same IP-subnet. Forwarding and Proxy ARP had been deactivated in this namespace.

So far, we have understood that routes have a decisive impact on the choice of the destination segment when ICMP- and ARP-requests are sent from a network namespace with multiple NICs – independent of forwarding being enabled or not. Insufficiently detailed routes can lead to problems and asymmetric arrival of replies from the segments – already on the ARP-level!

The obvious impact of routes on ARP-requests in our special scenario has surprised at least some readers, but I think remaining open questions have been answered in detail by the experiments discussed in the preceding post. We can now move on, on sufficiently solid ground.

We have also seen that even with detailed routes ARP- and ICMP-traffic paths to and from the L2-segments remain separated in our scenario (see the graphics below). The reason, of course, was that we had deactivated forwarding in the coupling namespace.

In this post we will study what happens when we activate forwarding. We will watch results of experiments both on the ICMP- and the ARP-level. Our objective is to link our otherwise separate L2-segments (with all their IPs in the same IP-subnet) seamlessly by a forwarding network namespace – and thus form some kind of larger segment. And we will test in what way Proxy ARP will help us to achieve this objective.

Not just fun …

Now, you could argue that no reasonable admin would link two virtual segments with IPs in the same IP-subnet by a routing namespace. One would use a virtual bridge. First answer: We perform virtual network experiments here for fun … Second answer: It's not just fun …

Our eventual objective is the configuration of virtual VLAN configurations and related security measures. Of particular interest are routing namespaces where two tagging VLANs terminate and communicate with a third LAN-segment, the latter leading to an Internet connection. The present experiments with standard segments are only a first step in this direction.

When we imagine replacing the standard segments by tagged VLAN segments, we already get the impression that we could use a common namespace for the administration of VLANs without accidentally mixing or transferring ICMP- and ARP-traffic between the VLANs. But the results of the last two posts also gave us a clear warning to distinguish carefully between routing and forwarding in namespaces.

The modified scenario – linking two L2-segments by a forwarding namespace

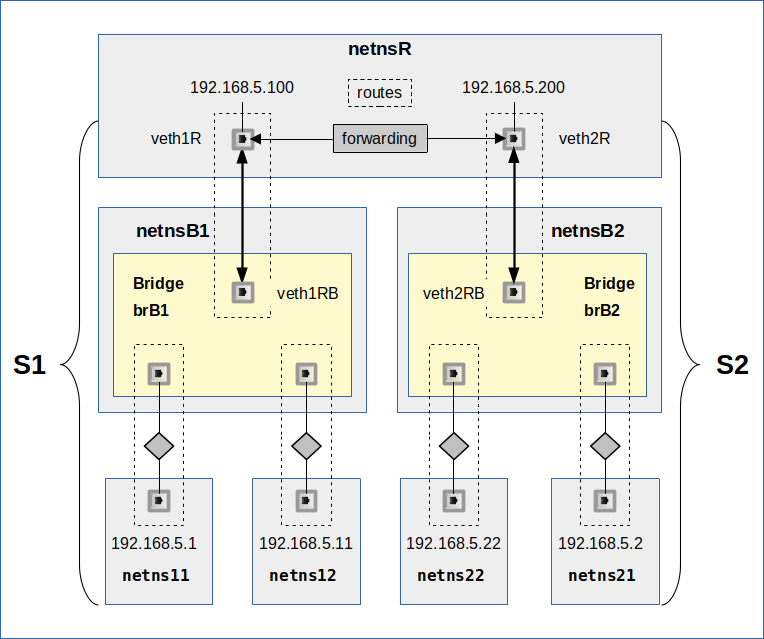

Let us have a look at a sketch of our scenario first:

We see our segments S1 and S2 again. All IPs are members of 192.168.5.0/24. The segments are attached to a common network namespace netnsR. The difference to previous scenarios in this post series lies in the activated forwarding and the definition of detailed routes in netnsR for the NICs with IPs of the same class C IP-subnet.

Our experiments below will look at the effect of default gateway definitions and at the requirement of detailed routes in the L2-segments’ namespaces. In addition we will also test in what way enabling Proxy ARP in netnsR can help to achieve seamless segment coupling in an efficient centralized way.

Theoretical consideration: Linking of two segments by a routing and forwarding namespace vs. linking by a bridge

Normally, one would couple two virtual L2-segments by a virtual Linux bridge and get a bigger coherent segment. But there is a fundamental difference, at least in theory, in comparison to a coupling via a forwarding namespace:

While a bridge (without filter rules) would allow ARP-traffic and the transfer of ARP-packets from one segment to another, this will not be the case across the forwarding namespace.

Forwarding should work on the IP layer and above, only. ARP-broadcasts should stick to the segments where they were generated. ARP-replies should not come from NICs residing in another segment attached to the coupling namespace. If we had physical segments attached to a host this would be a somewhat trivial statement. However, in all our scenarios all segments and coupling elements are virtual.

Keeping ARP within virtual LAN- and VLAN-segments is a matter of networking security. When we later want to separate some segments attached to a common network namespace via virtual VLAN-tagging, we want to exclude any information exchange on the ARP-level, even if forwarding is enabled. We can trust the Linux kernel to do the right things, but it's instructive to first check the ARP-traffic in the wake of ICMP-traffic between our virtual LAN-segments (of the same IP-subnet). We can also test ARP-packet transmission by executing arpings to NICs in the other segment.

The routing and forwarding network namespace, netnsR, may update its ARP-caches and MAC-IP-interface relations. But it should not transfer ARP-traffic between virtual segments. And ARP-packets going out from the coupling namespace netnsR must still find the right segment for their intended target. We saw already that this requires detailed routes – even if the NICs in both segments belong to one and the same IP-subnet. Detailed routes in netnsR guide both the emission of ARP-requests via Ethernet broadcasts and ICMP-requests into the right segment.

Theoretical consideration: The role of Proxy ARP

According to our theoretical considerations an ARP-request from a NIC in segment S1 to a NIC in segment S2 should remain unanswered, even with forwarding enabled. In some configurations this may be helpful in the sense of obfuscation, in other scenarios not. Activated Proxy ARP will enable answers from a coupling namespace: It replies with the MAC of the namespace’s border NIC in the segment of the MAC-requesting NIC. See the Proxy ARP link at the end of this post. What is this good for?

Well, it simply indicates a router. An ICMP-request for an IP in the other segment will be directed to the MAC a precursor ARP-request has identified. With Proxy ARP activated in netnsR the replied MAC would be that of the border-NIC of the segment which sent the ARP-request. The namespace (namely netnsR) afterward receiving the Ethernet packet with the encapsulated ICMP-request should know what to do with it. This would also allow for routed, forwarded traffic – even if we had not defined default or specific routers during the NIC-setup of all hosts/namespaces attached to the segments. We will check this assumption by our experiments.

It is an interesting question whether Proxy ARP would work in a Linux network namespace without having forwarding enabled. The experiments will show that this is not the case.

Side remark: Proxy ARP can also be used as an element of honey traps in security relevant setups. On the other hand, a wrongly or incompletely configured router with Proxy ARP can become a target for DoS-attacks.

Theoretical consideration: Discrimination between routing and forwarding?

Very often these terms are used almost as synonyms in textbooks discussing routers. But our experiments have shown that there is a subtle difference when talking about network namespaces: A network namespace which needs to talk to NICs in attached segments sends or routes its ARP- and ICMP-requests into one of the segments according to the selected route. It routes in this sense even if forwarding is disabled. Routes do have an impact on network actions already in non-forwarding namespaces.

Setup of the scenario’s configuration and enabling forwarding in the coupling network namespace

In one of the previous posts I have provided commands to set up the elements of our scenario. See the PDF for the extended setup with routes there. The only thing missing is the activation of forwarding. We enable forwarding for IPv4 in a network namespace by

netnsR:~ # echo 1 > /proc/sys/net/ipv4/ip_forward

or

netnsR:~ # echo 1 > /proc/sys/net/ipv4/conf/all/forwarding

just like on any Linux host. You may execute one of these commands in a terminal window where you have accessed namespace “netnsR” via the command “nsenter”.

Note that these settings are specific and local for the network namespace, even though the absolute paths seem to suggest otherwise. The kernel presents the contents of “/proc/sys/net/” per network namespace: A process that reads or writes these files sees the values of the network namespace it runs in. Network settings made by manipulating files in “/proc/sys/net” or by “sysctl” therefore have local effects in your network namespace. They do not change settings on the Linux host or in other network namespaces!

In case we need to be more specific in more complex scenarios, we can enable forwarding per NIC or interface for the devices listed in the namespace’s directory “/proc/sys/net/ipv4/conf/”.

Echoing a “0” instead of a “1” will deactivate forwarding again.
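The per-interface variant can be sketched with a small helper. This is only a minimal sketch under assumptions: the function names and the `PROC_ROOT` variable are hypothetical and not part of the setup PDF. `PROC_ROOT` merely allows trying the helper against a scratch directory first. Writing to the real files requires root privileges inside the namespace.

```shell
# Hypothetical helpers for illustration; PROC_ROOT defaults to the real /proc.
PROC_ROOT=${PROC_ROOT:-/proc}

# forwarding_file SCOPE
#   SCOPE is "all" or an interface name listed under .../ipv4/conf/
forwarding_file() {
    echo "$PROC_ROOT/sys/net/ipv4/conf/$1/forwarding"
}

# set_forwarding SCOPE VALUE   (VALUE: 1 = enable, 0 = disable)
set_forwarding() {
    echo "$2" > "$(forwarding_file "$1")"
}

# get_forwarding SCOPE   -> prints the current value
get_forwarding() {
    cat "$(forwarding_file "$1")"
}
```

In a shell that has entered netnsR via nsenter, `set_forwarding all 1` corresponds to the second echo command shown above, and `get_forwarding all` reads the value back.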

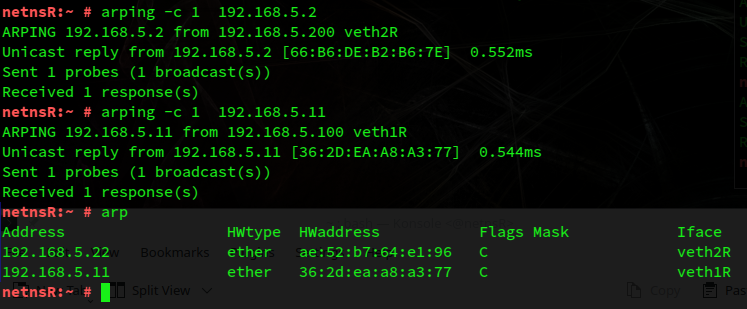

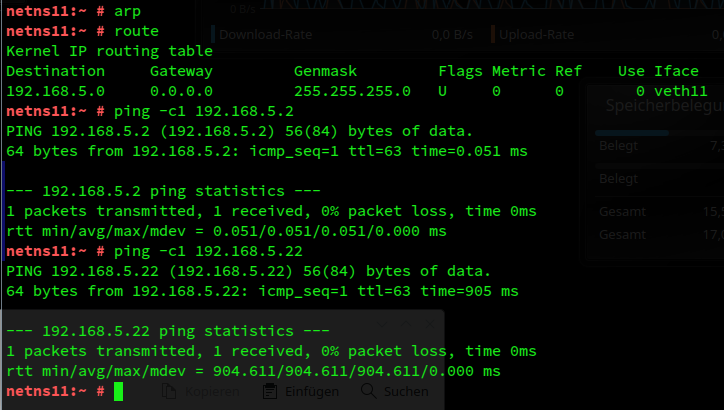

Experiment 1: arpinging from netnsR, netns12 and netns22

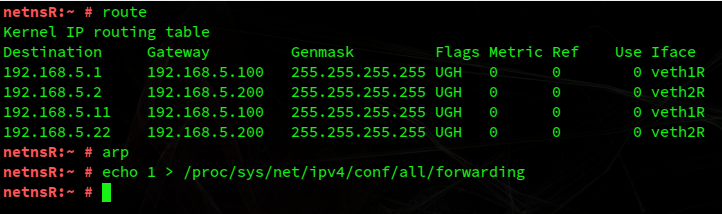

We first check the routes and the ARP cache in netnsR:

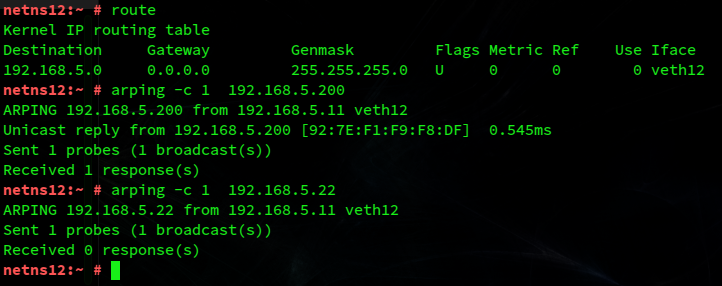

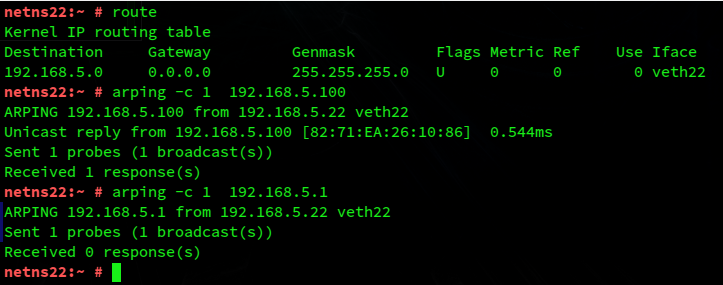

According to the results of my previous post and the considerations above we expect that arping-requests from netns12 and netns22 for IPs in netnsR get an answer. Also ARP-requests from netnsR for IPs in the segments S1 and S2 should receive a reply. However, requests to IPs in the other segment should not receive any reply. And indeed:

In contrast to the results in my previous post we see both the expected positive impact of our detailed routes and the resulting symmetry in the replies with respect to the segments.
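For readers who want to repeat the arping checks of this experiment, a minimal sketch follows. It assumes an arping variant whose exit status is 0 only if at least one reply arrived (the iputils arping behaves this way in its normal mode). The function name `arping_check` is hypothetical, and you have to insert the interface names of your own setup.

```shell
# arping_check IFACE TARGET_IP
# Sends two ARP requests via IFACE and reports whether any reply arrived.
# Assumption: arping exits with status 0 only when a reply was received.
arping_check() {
    if arping -c 2 -I "$1" "$2" >/dev/null 2>&1; then
        echo "$2: reply"
    else
        echo "$2: no reply"
    fi
}
```

Called in netns12 with the namespace's own NIC as first argument, a target of 192.168.5.100 should give "reply", whereas 192.168.5.22 in the other segment should give "no reply".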

Experiment 2: ICMP-requests from netns12 to netns22

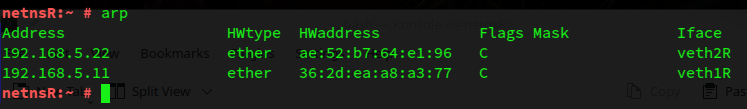

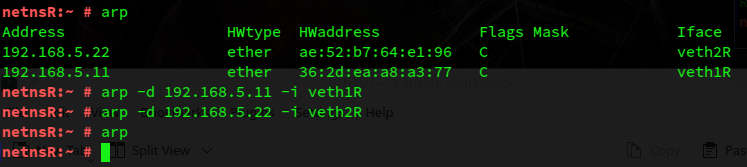

Let us look at the ARP cache entries in netnsR:

Remember that these entries resulted from the ARP-requests of netns12 and netns22. And not from replies received for arpings sent from netnsR. We saw in the last post that the Linux kernel regards replies to ARP-requests sent from userspace as unsolicited – and, therefore, does not update its cache. Anyway, let us delete the entries of the cache:

Without showing screenshots for it, we also empty the ARP caches of netns11, netns12, netns21, netns22.

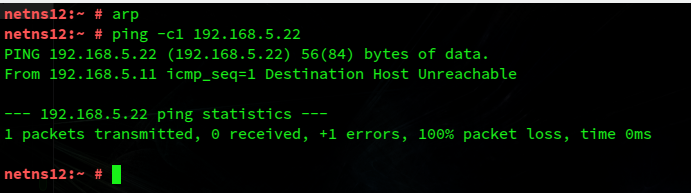

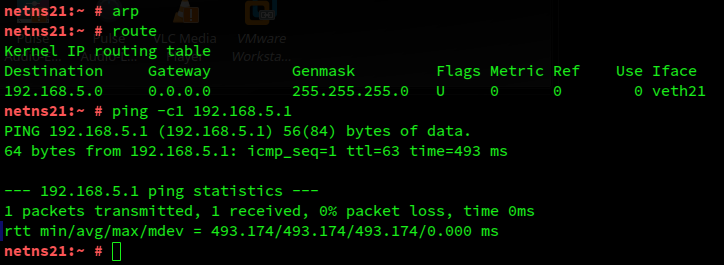

Well, we have forwarding enabled in netnsR. So, will an ICMP-request from netns12 (192.168.5.11) to netns21 (192.168.5.2) work? No, it does not.

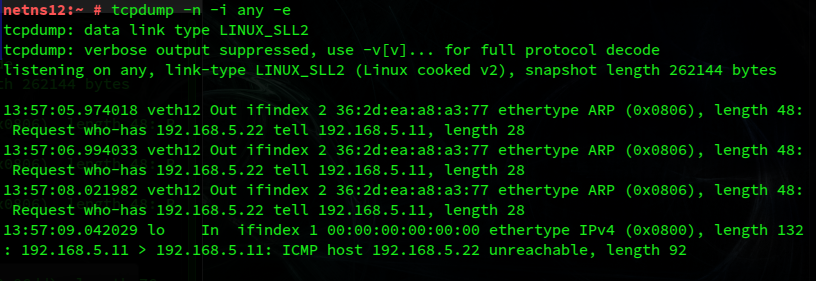

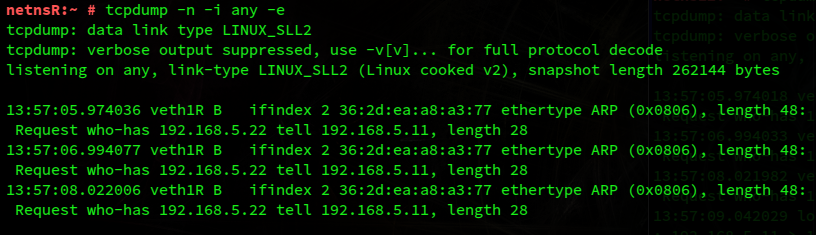

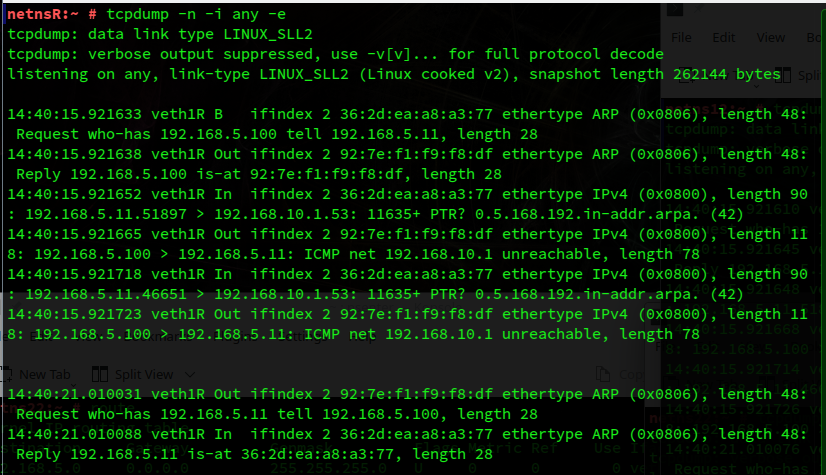

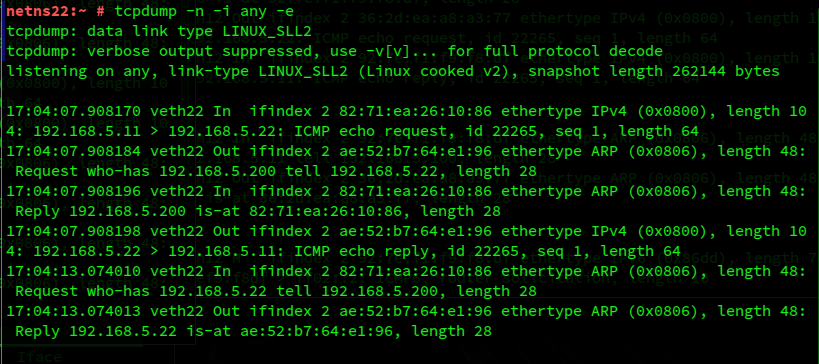

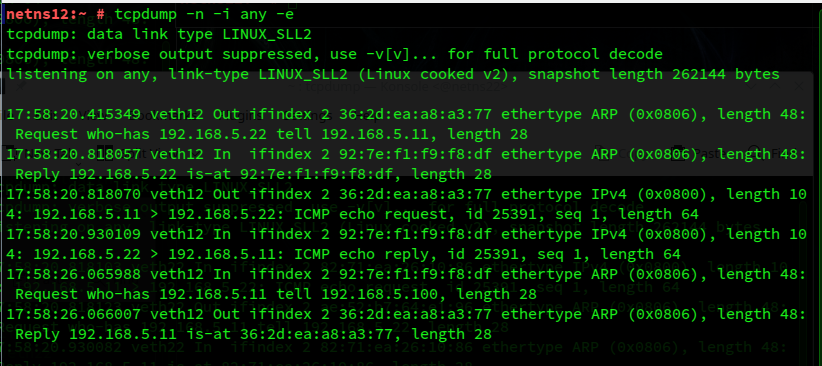

Why? tcpdump reveals the reason:

You have certainly guessed it: netns12 does not have the slightest idea that it needs a router to communicate with certain IPs of its own IP-subnet. No default gateway was defined either.

No effect of a default gateway

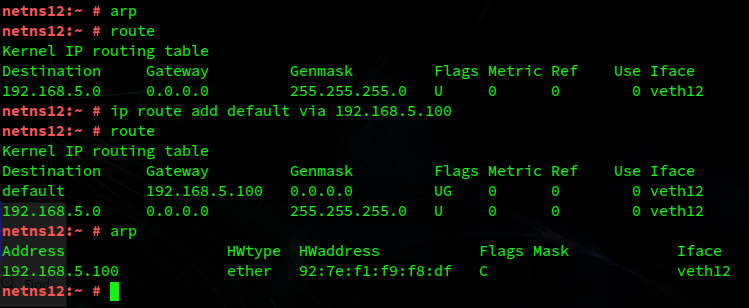

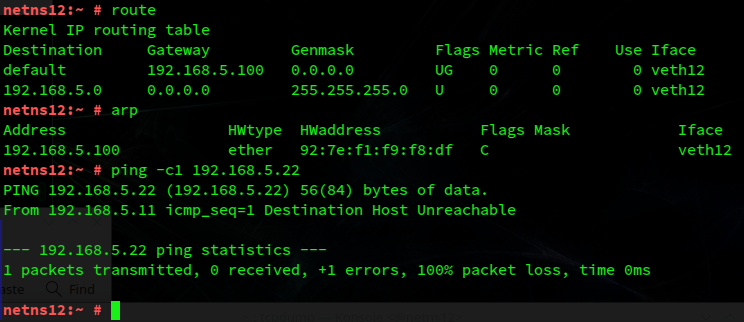

OK, let us define a default gateway in netns12:

Our namespace reacts to this relatively quickly. More precisely to the second route-command: Its first automatic action is to look for a DNS-server.

The information about which IP to look for was taken from “/etc/resolv.conf” of the host (!) on which the namespaces are created. Note that this behavior may break the assumed isolation of a network namespace, e.g. if netnsR had a default gateway route leading to an active DNS server.

However, the creation of a separate and isolating DNS-configuration for a bunch of unnamed namespaces via bind mounts is beyond the scope of this post. For setting up a DNS-service, e.g. based on “bind”, for named namespaces via “ip netns” see respective articles on the Internet.

Anyway, does the definition of a default gateway help regarding our ICMP-request? We have, of course, to set such a gateway also in netns22. But no, these extra definitions would not help!

Reason: The route for 192.168.5.0/24 is, of course, still in place and used as a base for all actions, e.g. in netns12. It is more specific than the default route and therefore wins. The resulting precursor ARP-request for the MAC of 192.168.5.22, which is required to create an appropriate Ethernet packet for the ICMP-payload, remains without reply as before.

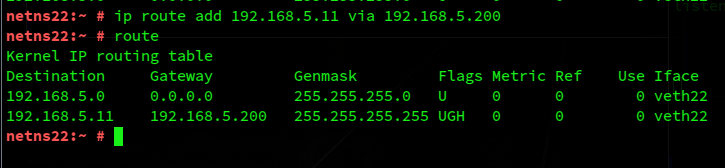

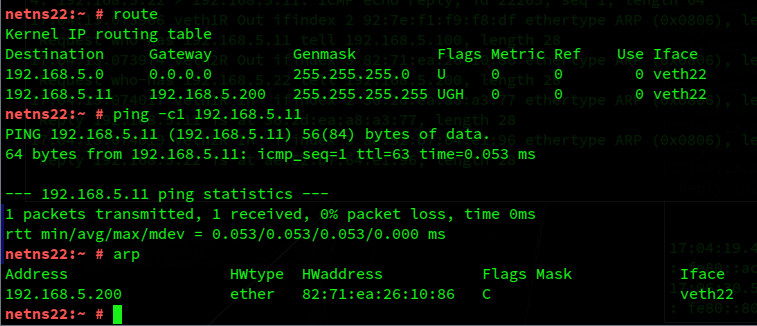

Experiment 3: ICMP-requests from netns12 to netns22 and reverse – with detailed routes in all affected netns

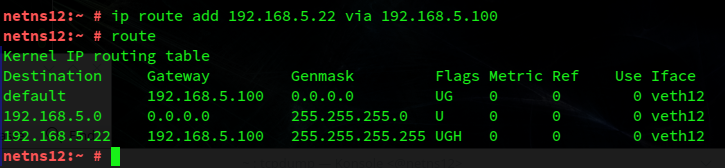

netns12 in our experiments, obviously, needs route information saying that the NIC with 192.168.5.22 (or 192.168.5.2) can only be reached via our forwarding namespace, that is via 192.168.5.100. netns12 must know which IP and related MAC it has to send the ICMP-request to for routing and forwarding. In other words: We must define a specific route to our target IP including the IP of the gateway NIC for forwarding. This requires a definition via a command like

ip route add 192.168.5.22 via 192.168.5.100

From the last post we know that between various fitting routes to a target the most specific one will be taken by the Linux kernel.
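The rule that the most specific route wins can be illustrated by a small shell model of a longest-prefix match. This is a sketch for illustration only and not the kernel's implementation. The function names `ip_to_int` and `best_route` are made up, and gateways, metrics and routing tables are ignored.

```shell
# ip_to_int A.B.C.D -> prints the IPv4 address as one integer
ip_to_int() (
    IFS=.
    set -- $1          # split the dotted quad into four octets
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# best_route TARGET ROUTE...   (each ROUTE written as PREFIX/LEN)
# Prints the matching route with the longest prefix, or an empty line.
best_route() (
    target=$(ip_to_int "$1"); shift
    best=""; best_len=-1
    for route in "$@"; do
        net=$(ip_to_int "${route%/*}")
        len=${route#*/}
        if [ "$len" -eq 0 ]; then
            mask=0     # the default route matches every target
        else
            mask=$(( (0xFFFFFFFF << (32 - $len)) & 0xFFFFFFFF ))
        fi
        if [ $(( $target & $mask )) -eq $(( $net & $mask )) ] &&
           [ "$len" -gt "$best_len" ]; then
            best=$route; best_len=$len
        fi
    done
    echo "$best"
)
```

With only a default route and the subnet route, `best_route 192.168.5.22 0.0.0.0/0 192.168.5.0/24` prints 192.168.5.0/24, which is why a default gateway alone did not help. Adding 192.168.5.22/32 to the argument list makes the host route win.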

Thinking ahead, we, of course, need the same kind of route specification in the namespace addressed by the ARP-request, i.e. in netns22 and there for the NIC with 192.168.5.22.

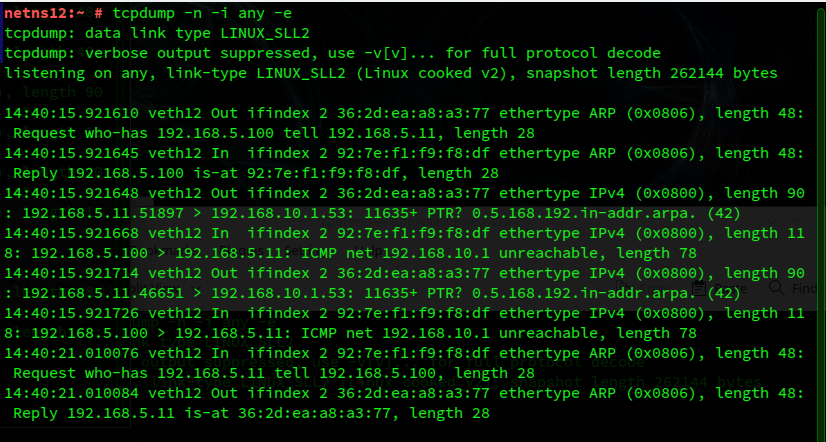

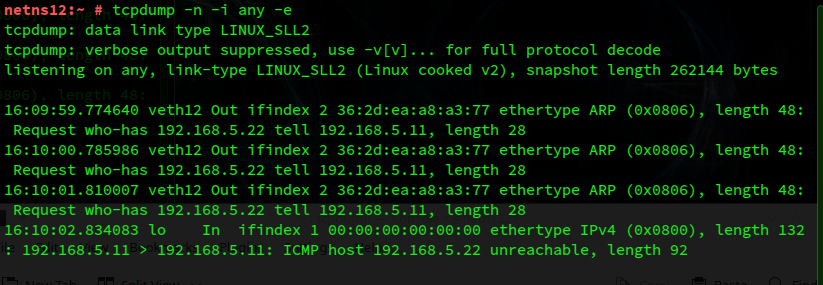

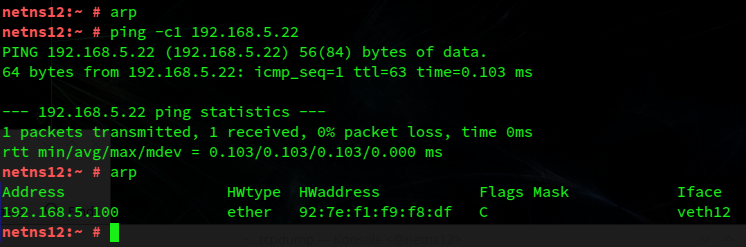

Note that we did not even define a default gateway there. Now let us clear all ARP caches on netns12 and netns22 again. Afterward we run the ICMP-request again:

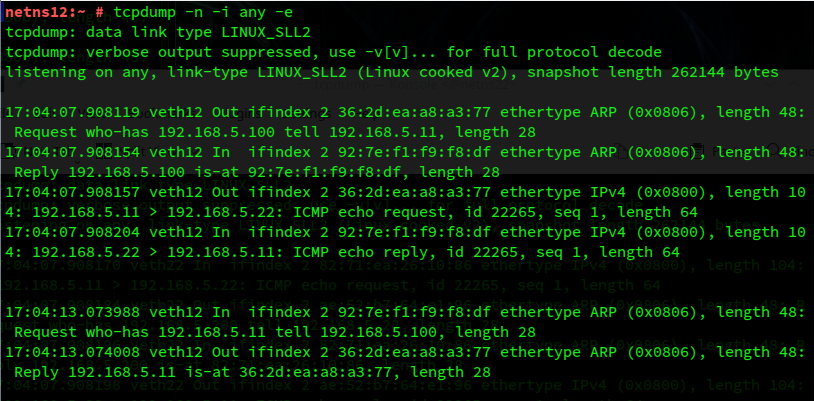

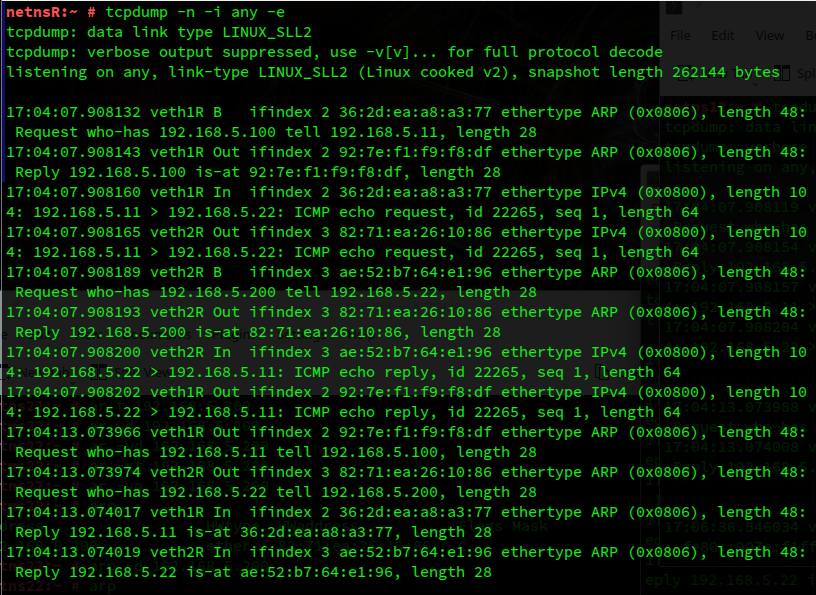

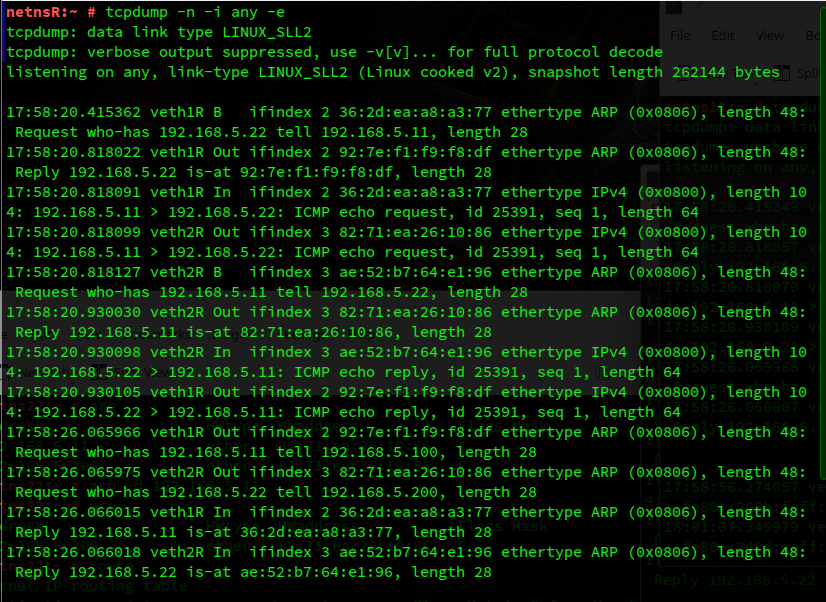

tcpdump shows the transfer of both ARP and ICMP packets:

Success! We see that the routes now do their job. Of course, it works also the other way round:

Detailed routes in all network namespaces – or Proxy ARP in the forwarding namespace?

Well, we understand that to link our L2-segments S1 and S2 seamlessly by a forwarding network namespace, we must establish detailed routes in all namespaces and for all NICs. The reason is that each namespace needs to know to which forwarding namespace and respective MAC it has to send the Ethernet-packet containing its ICMP-request. It simply needs the MAC of the routing device to use when sending ICMP-requests to segment S2. Beyond that, it has to trust the router’s ability to forward the request correctly.

The MAC required is of course that of veth1R (192.168.5.100), which is a border-NIC of segment S1. But to know that it must use 192.168.5.100 as a forwarder, netns12 requires a respective route with a gateway address.

To set up all of the required routes would be some effort growing quadratically with more members of both segments. Really? Can’t we have it a bit simpler?
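To get a feeling for the effort, the following sketch prints the host routes that would be required for given member lists of both segments. It only prints the commands and executes nothing. The function name `gen_cross_routes` is hypothetical, and the gateway IP of netnsR's border NIC in S2 must be taken from your own setup.

```shell
# gen_cross_routes GW_S1 GW_S2 "IPs in S1" "IPs in S2"
# Prints one "ip route add" line per (source IP, target IP) pair.
# GW_S1 / GW_S2: IPs of the border NICs of the forwarding namespace
# in segment S1 and S2, respectively.
gen_cross_routes() (
    gw1=$1; gw2=$2; s1=$3; s2=$4
    for src in $s1; do
        for dst in $s2; do
            echo "$src: ip route add $dst via $gw1"
        done
    done
    for src in $s2; do
        for dst in $s1; do
            echo "$src: ip route add $dst via $gw2"
        done
    done
)
```

For n members in S1 and m members in S2 the sketch prints 2·n·m lines, so two members per segment already require eight host routes.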

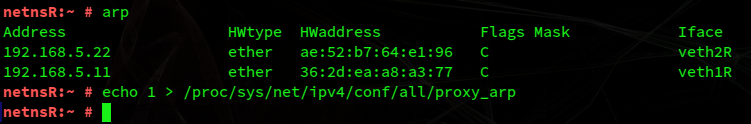

This is the point where Proxy ARP could come into the game. What if we deleted our specific routes in netns11, netns12, netns21 and netns22 again? And instead activated Proxy ARP in netnsR? From our considerations above we would expect some positive effect … and hopefully reduce the effort to a linear one in the central namespace …

But even if we find this to be true, we should keep in mind that activating Proxy ARP can also come with some risks …

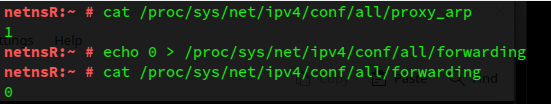

Experiment 4: ICMP-requests across the segments with activated Proxy ARP in the forwarding namespace netnsR

We activate Proxy ARP in netnsR by the command

echo 1 > /proc/sys/net/ipv4/conf/all/proxy_arp
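Instead of the “all” switch, Proxy ARP can also be activated only for selected interfaces, e.g. the border NICs veth1R and veth2R of netnsR. The following is a minimal sketch under assumptions: the helper names `run` and `enable_proxy_arp` and the `DRY_RUN` switch are hypothetical. With `DRY_RUN=1` the commands are only printed, which allows a check before changing anything. Forwarding is switched on as well, because Proxy ARP depends on it.

```shell
# run: execute a command, or only print it when DRY_RUN=1 (hypothetical helper)
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# enable_proxy_arp IFACE...
# Enables IPv4 forwarding and then Proxy ARP for each given interface.
# Must be run as root inside the forwarding namespace.
enable_proxy_arp() {
    run sysctl -w net.ipv4.conf.all.forwarding=1
    for iface in "$@"; do
        run sysctl -w "net.ipv4.conf.$iface.proxy_arp=1"
    done
}
```

A call like `enable_proxy_arp veth1R veth2R` in netnsR should have an effect comparable to the “all” switch for these two NICs, since the kernel treats Proxy ARP as enabled on an interface if either the “all” or the interface-specific value is set.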

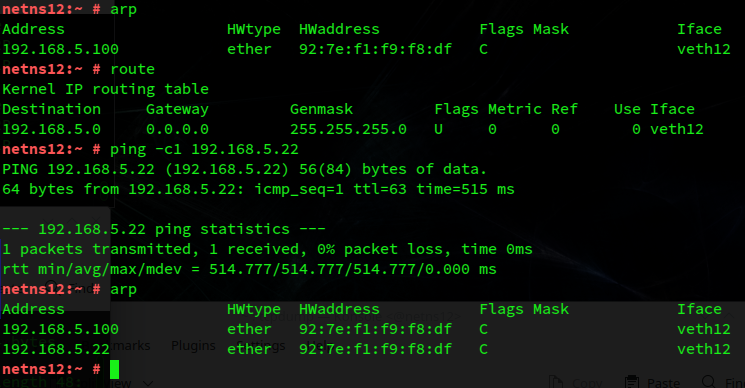

After removing the specific routes to target IPs in netns12 and netns22, we can then send a ping from netns12 to 192.168.5.22 again:

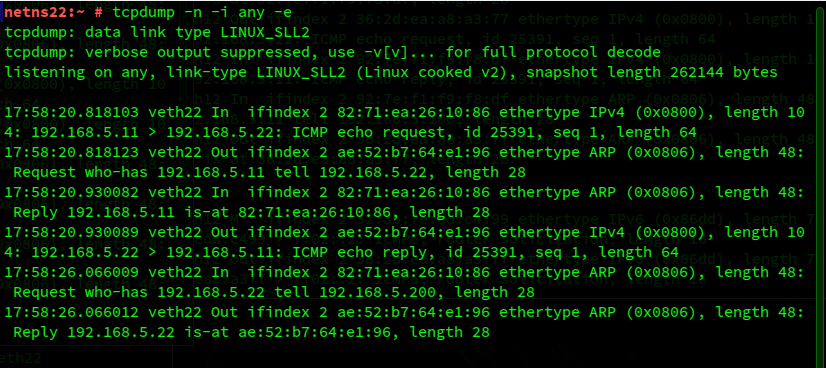

This works! netns12 receives a MAC to which it can address its ICMP-request for forwarding.

Our routing namespace knows what to do with the ICMP-request and forwards it via veth2R to segment S2.

And also netns22 receives the required information and knows what to do – despite a missing detailed route:

Obviously, Proxy ARP helps us to compensate for the lack of detailed routes in our two L2-segments.

Pings between other namespaces also do work with activated Proxy ARP.

Proxy ARP requires activated forwarding in the routing network namespace

In a final experiment we deactivate forwarding again by

netnsR:~ # echo 0 > /proc/sys/net/ipv4/conf/all/forwarding

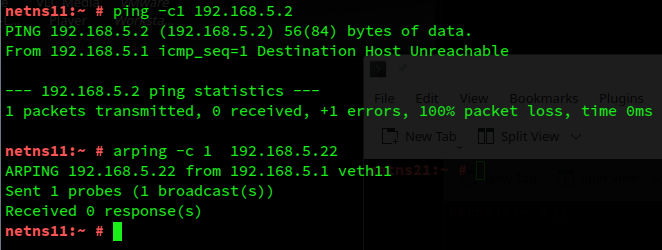

Afterward an ICMP-request, e.g. from netns11 to 192.168.5.22, fails and an ARP-request remains unanswered:

Proxy ARP unfolds its effects only in a network namespace with forwarding enabled. This makes sense in standard configurations: Why send the MAC-address of an interface that is not able to forward?

Conclusion

In this post we coupled two otherwise separate L2-segments with all IPs belonging to the same IP-subnet by a forwarding network namespace. We have seen that detailed route information is required at least in the coupling namespace. Additionally activated Proxy ARP will provide the NICs and namespaces in the segments with MACs of the routing namespace. This makes a centralized setup possible, where we need to focus on the route definitions of the routing and forwarding namespace, only. The alternative was to equip all of the namespaces attached to the segments with detailed routes.

We confirmed once again that in virtual networks an ARP-request is bound to the virtual segment where the respective Ethernet broadcast is generated. The larger virtual segment we have created does not allow for cross-segment ARP. It works on layer 3 and beyond, but keeps up the separation on the Link layer. This is a major difference in comparison to direct segment coupling by veths or segment linking by a virtual Linux bridge.

In the next post

More fun with veth, network namespaces, VLANs – VI – veth subdevices for private 802.1q VLANs

we will return to virtual VLAN-scenarios. We will first study which configuration options we have to make veth-devices VLAN-aware.

Links

PDF with commands for setting up the scenario with enabled forwarding and Proxy ARP in netnsR

create_netns_forward

Proxy ARP:

https://kinsta.com/knowledgebase/what-is-arp/

Enable Proxy ARP

https://ixnfo.com/en/how-to-enable-or-disable-proxy-arp-on-linux.html