I am a retired physicist with a hobby: Machine Learning [ML]. I travel sometimes. I would like to work with my ML programs even when I only have a laptop with inadequate hardware available. One of my ex-colleagues recommended Google Colab as a solution for my problem. Well, I am no friend of the tech giants: for everything they offer as “free” Cloud services you actually pay a lot by giving them your personal data in the first place. My general experience is also that sooner or later you have to pay for the resources a serious project requires, i.e. when you want and need more than just a playground.

Nevertheless, I gave Colab a try some days ago. My first impression of the alternative “Paperspace” was unfortunately not a good one. “No free GPU resources” is not a good advertisement for a first time visitor. When I afterward tried Google’s Colab I directly got a Virtual Machine [VM] providing a Jupyter environment and an optional connection to a GPU with a reasonable amount of VRAM. So, is everything nice with Google Colab? My answer is: Not really.

Google’s free Colab VMs have hard limits regarding RAM and VRAM. In addition there are unclear limits regarding CPU/GPU usage over multiple sessions in an unknown period of days. In this post series I first discuss some of these limits. In a second post I describe a few general measures on the coding side of ML projects which may help to make your ML project compatible with RAM and VRAM limitations.

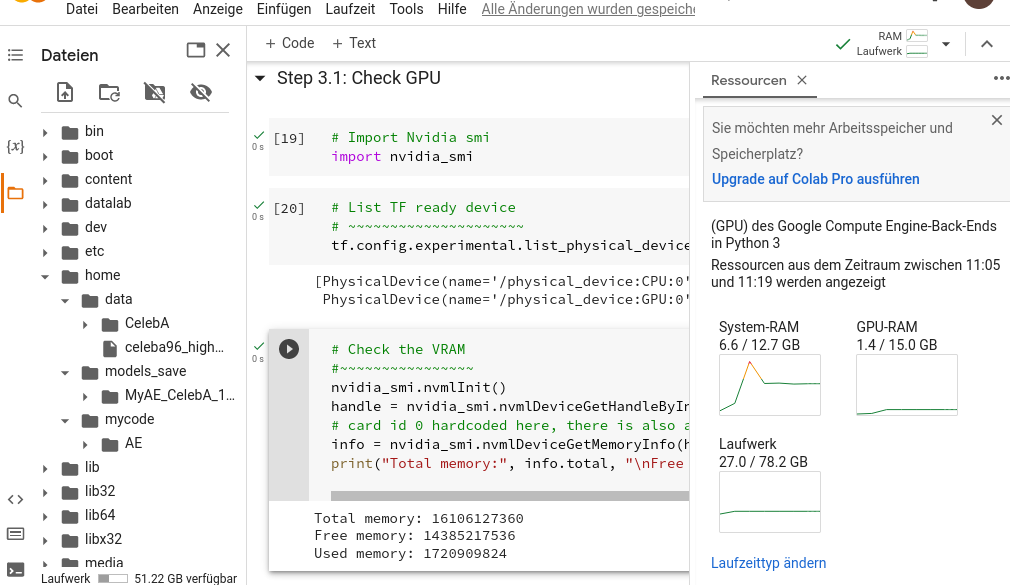

The 12.7 GB RAM limit of free Colab VMs

Even for mid-size datasets you soon feel the 12.7 GB limit on RAM as a serious obstacle. Some RAM (around 0.9 to 1.4 GB) is already consumed by the VM for general purposes. So, we are left with around 11 GB. My opinion: This is not enough for mid-size projects with either big amounts of text or hundreds of thousands of images – or both.
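To see how much RAM is really left on a VM, one can read the Linux file /proc/meminfo. The following is a minimal sketch which assumes a Linux VM; the function names are my own choice for illustration. It reports values in GiB (2**30 bytes), which may differ slightly from the unit used in Colab's own display.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of integer values (in kB)."""
    values = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue
        fields = rest.split()
        if fields and fields[0].isdigit():
            values[key.strip()] = int(fields[0])
    return values


def ram_status_gib(path="/proc/meminfo"):
    """Return (total, available) RAM in GiB as reported by the Linux kernel."""
    with open(path) as fh:
        info = parse_meminfo(fh.read())
    # /proc/meminfo counts in units of 1024 bytes, so kB / 2**20 gives GiB.
    return info["MemTotal"] / 2**20, info["MemAvailable"] / 2**20
```

Calling ram_status_gib() in a notebook cell before and after loading data gives a rough picture of how much of the limit a dataset consumes.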

When I read about Colab I found articles on the Internet saying that 25 GB RAM was freely available. The trick was to drive the VM into a crash by allocating too much RAM. Afterward Google would generously offer you more RAM. Really? Nope! This has not worked since July 2020. Read through the discussion here:

Google instead wants you to pay for Colab Pro. But as reports on the Internet will tell you: You still get only 25 GB RAM with Pro. So as soon as you want to do some serious work with Colab you are supposed to pay – a lot for Colab Pro+. This is what many professional people will do – as it often takes more time to rework the code than to just pay a limited amount per month. I shall go a different way.

Why is RAM consumption not always negative?

I admit: When I work with ML experiments on my private PCs, RAM seldom is a resource I think about much. I have enough RAM (128 GB) on one of my Linux machines for most of the things I am interested in. So, when I started with Colab I naively copied and ran cells from one of my existing Jupyter notebooks without much consideration. And pretty soon I crashed the VMs due to an exhaustion of RAM.

Well, normally we do not use RAM to a maximum for fun or to irritate Google. The basic idea of having the objects of an ML dataset in a Numpy array or tensor in RAM is a fast transfer of batch chunks to and from the GPU – you do not want to have a disk involved when you do the real number-crunching. Especially not for training runs of a neural network. But the limits on Colab VMs make a different and more time-consuming strategy obligatory. I discuss elements of such a strategy in the next post.

15 GB of GPU VRAM

The GPU offer is OK from my perspective. The GPU is not the fastest available. However, 15 GB is something you can do a lot with. Still, there are datasets for which you may have to implement a batch-based data flow to the GPU via a Keras/TF2 generator. I also discuss this approach in more detail in the next post.
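The principle of such a batch-based data flow can be sketched in plain Python. This is not the Keras API and not the generator of the next post, only an illustration with function names of my own choice. It works on any sliceable object, e.g. a list or a Numpy array.

```python
def batch_slices(n_samples, batch_size):
    """Yield slice objects covering range(n_samples) in consecutive batches."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, n_samples, batch_size):
        # The last batch may be shorter than batch_size.
        yield slice(start, min(start + batch_size, n_samples))


def batch_generator(data, batch_size):
    """Yield consecutive batches from any sliceable sequence."""
    for sl in batch_slices(len(data), batch_size):
        yield data[sl]


print(list(batch_generator(list(range(10)), 4)))
# prints [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```

Only one batch at a time has to be handed to the GPU. For training an Autoencoder a generator would typically yield pairs of inputs and targets and loop over the data once per epoch.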

Sometimes: No access to a GPU or TPU

Whilst preparing this article I was “punished” by Google for my Colab usage during the last 3 days. My test notebook was not allowed to connect to a GPU any more – instead I was asked to pay for Colab Pro. Actually, this happened after some successful measures to keep RAM and VRAM consumption rather low during some “longer” test runs the day before. Two hours later – and after having worked on the VM's CPU only – I got access to a GPU again. By what criterion? Well, you have neither control nor a clear overview of usage limits and of how close you have come to such a limit (see below). And uncontrollable phases during which Google may deny you access to a GPU or TPU are not conditions you want to see in a serious project.

No clear resource consumption status over multiple sessions and no overview over general limitations

Colab provides an overview over RAM, GPU VRAM and disk space consumption during a running session. That’s it.

On a web page about Colab resource limitations you find the following statement (05/04/2023): “Colab is able to provide resources free of charge in part by having dynamic usage limits that sometimes fluctuate, and by not providing guaranteed or unlimited resources. This means that overall usage limits as well as idle timeout periods, maximum VM lifetime, GPU types available, and other factors vary over time. Colab does not publish these limits, in part because they can (and sometimes do) vary quickly. You can relax Colab’s usage limits by purchasing one of our paid plans here. These plans have similar dynamics in that resource availability may change over time.”

In short: Colab users get no complete information and have no control over resource access – independent of whether they pay or not. Not good. And there are no price plans for students or elderly people. We understand: In the mindset of Google’s management serious ML is something for the rich.

The positive side of RAM limitations

Well, I am retired and have no time pressure in ML projects. For me the positive side of limited resources is that you really have to care about splitting project processes into cycles for scalable batches of objects. In addition one must take care of Python’s garbage collection to free as much RAM as possible after each cycle. This is a good side-effect of Colab, as it teaches you to meet future resource limits on other systems.
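A minimal sketch of such a cycle with explicit cleanup could look as follows. The function process_in_cycles and its callbacks load and work are hypothetical names for illustration. Whether freed memory is actually returned to the operating system also depends on the allocator, so take this as a pattern, not a guarantee.

```python
import gc


def process_in_cycles(n_items, cycle_size, load, work):
    """Process n_items in cycles and keep only the small result of each cycle."""
    results = []
    for start in range(0, n_items, cycle_size):
        stop = min(start + cycle_size, n_items)
        chunk = load(start, stop)    # large, temporary object
        results.append(work(chunk))  # keep only the (small) result
        del chunk                    # drop the last reference to the large object
        gc.collect()                 # additionally clean up unreachable reference cycles
    return results


# Toy usage: "load" builds a list of integers, "work" reduces it to a sum.
print(process_in_cycles(10, 4, lambda a, b: list(range(a, b)), sum))
# prints [6, 22, 17]
```

The point of the design is that no reference to a large intermediate object survives the end of a cycle.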

My test case

As you see from other posts in this blog I presently work with (Variational) Autoencoders and study data distributions in latent spaces. One of my favorite datasets is CelebA. When I load all of my prepared 170,000 training images into a Numpy array on my Linux PC, more than 20 GB RAM are used. (And these are already centered and cropped images with a resolution of 96×96 pixels.) This will not work on Colab. Instead we have to work with much smaller batches of images and process them consecutively. From my image arrays I normally take slices and provide them to my GPU for training or prediction. The tool is a generator. This should work on Colab, too.
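A quick estimate shows why the full array does not fit. The sketch below assumes 3 color channels and 4 bytes per value (float32). Both are assumptions of mine and not stated above, as are the function names.

```python
def array_bytes(n_images, height, width, channels, bytes_per_value):
    """Raw size in bytes of a dense image array."""
    return n_images * height * width * channels * bytes_per_value


def max_images(budget_bytes, height, width, channels, bytes_per_value):
    """Largest number of images whose raw data fit into budget_bytes."""
    return budget_bytes // (height * width * channels * bytes_per_value)


# Assumption: RGB images (3 channels) stored as float32 (4 bytes per value).
full = array_bytes(170_000, 96, 96, 3, 4)
print(f"{full / 1e9:.1f} GB")  # prints 18.8 GB

# Images that would fit into the roughly 11 GB left on a free Colab VM.
print(max_images(11 * 10**9, 96, 96, 3, 4))
```

Under these assumptions the raw data alone come to about 18.8 GB, which is of the same order as the consumption observed on my PC. Any copy made during preprocessing adds to that.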

One of my neural layer models for experiments with CelebA is a standard Convolutional Autoencoder (with additional Batch Normalization layers). The model was set up with the help of Keras for Tensorflow 2.

First steps with Colab – and some hints

The first thing to learn with Colab is that you can attach your Google MyDrive (coming with a Google account) to the VM environment where you run your Jupyter notebooks. But you should not work interactively with data files and datasets on the mounted disk (on /content/MyDrive on the VM). The mount is done over a network and not via a local system bus. Transfers to MyDrive are pretty slow – in fact slower than what I have experienced with sshfs-based mounts on other hosted servers. So: Copy single files to and from MyDrive, but afterward work with such files in some directory on the VM (e.g. under /home).

This means: The first thing you have to take care of in a Colab project is the coding of a preparation process which copies your ML datasets, your own modules for details of your (Keras) based ML model architecture, ML model weights and maybe latent space data from your MyDrive to the VM.
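Such a preparation step can be sketched with Python's standard library. The function name stage_files and the paths in the usage comment are hypothetical. The sketch assumes that MyDrive is already mounted on the VM.

```python
import shutil
from pathlib import Path


def stage_files(src_dir, dst_dir, names):
    """Copy the named files from src_dir to dst_dir and return the new paths."""
    src_dir, dst_dir = Path(src_dir), Path(dst_dir)
    copied = []
    for name in names:
        target = dst_dir / name
        # Create missing target directories, also for names like "sub/file.npy".
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src_dir / name, target)  # copies content and timestamps
        copied.append(target)
    return copied


# Hypothetical usage on a Colab VM (directory and file names are placeholders):
# stage_files("/content/MyDrive/celeba", "/home/ml/celeba", ["train_imgs.npy"])
```

One large file per dataset is probably the better choice here than thousands of small image files, as each file access on the mounted drive goes over the network.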

A second thing which you may have to do is to install some helpful Python modules which the standard Colab environment may not contain. One of these modules is the Python version of the Nvidia smi. It took me a while to find out that the right smi module for present Python 3 versions is “nvidia-ml-py3”. So the required Jupyter cell command is:

!pip install nvidia-ml-py3

Other modules (e.g. seaborn) work with their standard names.
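Once nvidia-ml-py3 is installed, it can be imported under the name pynvml. The following sketch queries the VRAM consumption of one GPU. The function name gpu_memory_gib is my own, and the function simply returns None when no module, driver or GPU is available (e.g. on a CPU-only VM).

```python
def gpu_memory_gib(index=0):
    """Return (used, total) VRAM in GiB for one GPU, or None if unavailable."""
    try:
        import pynvml  # module provided by the nvidia-ml-py3 package
        pynvml.nvmlInit()
    except Exception:
        # Module not installed, no Nvidia driver, or no GPU attached to the VM.
        return None
    try:
        handle = pynvml.nvmlDeviceGetHandleByIndex(index)
        info = pynvml.nvmlDeviceGetMemoryInfo(handle)  # values in bytes
        return info.used / 2**30, info.total / 2**30
    except pynvml.NVMLError:
        return None
    finally:
        pynvml.nvmlShutdown()


print(gpu_memory_gib())
```

Calling this before and after a training run gives a simple check of how much VRAM a model and its batches occupy.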

Conclusion

Google Colab offers you a free Jupyter-based ML environment. However, you have no guarantee that you can always access a GPU or a TPU. In general the usage conditions over multiple sessions are not clear. This alone, in my opinion, disqualifies the free Colab VMs as an environment for serious ML projects. But if you have no money for adequate machines it is at least good for development and limited tests. Or for learning purposes.

In addition the 12.7 GB limit on RAM usage is a problem when you deal with reasonably large datasets. This makes it necessary to split the work with such datasets into multiple steps based on batches. One also has to code such that Python’s garbage collection can do its work at short intervals. In the next post I present and discuss some simple measures to control the RAM and VRAM consumption. It was a bit surprising for me that one sometimes has to manually care about the Keras Backend status to keep the RAM consumption low.

Links

Tricks and tests

https://damor.dev/your-session-crashed-after-using-all-available-ram-google-colab/

https://github.com/googlecolab/colabtools/issues/253

https://www.analyticsvidhya.com/blog/2021/05/10-colab-tips-and-hacks-for-efficient-use-of-it/

Alternatives to Google Colab

See a YouTube video by “1littlecoder”, who discusses three alternatives to Colab: https://www.youtube.com/watch?v=xfzayexeUss

Kaggle (which is also Google)

https://towardsdatascience.com/kaggle-vs-colab-faceoff-which-free-gpu-provider-is-tops-d4f0cd625029

Criticism of Colab

https://analyticsindiamag.com/explained-5-drawback-of-google-colab/

https://www.reddit.com/r/GoogleColab/comments/r7zq3r/is_it_just_me_or_has_google_colab_suddenly_gotten/

https://www.reddit.com/r/GoogleColab/comments/lgz04a/regarding_usage_limits_in_colab_some_common_sense/

https://github.com/googlecolab/colabtools/issues/1964

https://medium.com/codex/can-you-use-google-colab-free-version-for-professional-work-69b2ba4392d2