My wife and I use the expression “Windust” for the Windows operating system. A “dust” is a somewhat stupid person in Norwegian. I will use this expression below.

My wife has a rather old laptop (Terra 1541 Pro). It has survived everything from Windust 7 up to the latest Windust 10. It was the only one of our laptops with a full Windows installation. We used it for communication with some customers who had Windows only. Skype and Teams are the keywords.

During the last Windust 10 updates the laptop got slower and slower. In addition, according to MS, the laptop does not qualify for Windows 11. A neighbor of ours had the same problem. What do Windust users (like our neighbor) do in such situations? They try a full Windows (10) installation from scratch and/or buy themselves a new laptop. It is so typical and so “dust” …

Revival with Linux?

My wife and I are retired persons. We no longer need to care about customers who depend on Windust. For the few remaining ones a small virtual installation under KVM on a workstation is sufficient for all practical purposes. So, we thought: This old laptop is a typical case for a revival cure with Linux.

A good friend of ours organized a new rechargeable battery pack for us, and we ordered a 1 TB SSD in addition. The screen has a resolution of 1920×1080, and the laptop has 16 GB of RAM. Graphics is Intel based. All in all, for non-professional purposes it is a well equipped laptop. We therefore decided to finally say goodbye to our last Windows installation, which had slowed down the laptop.

Opensuse Leap 15.5 installation

Yesterday, I installed Opensuse Leap 15.5 on the laptop. From an ISO-image on DVD. No problems occurred during the installation process.

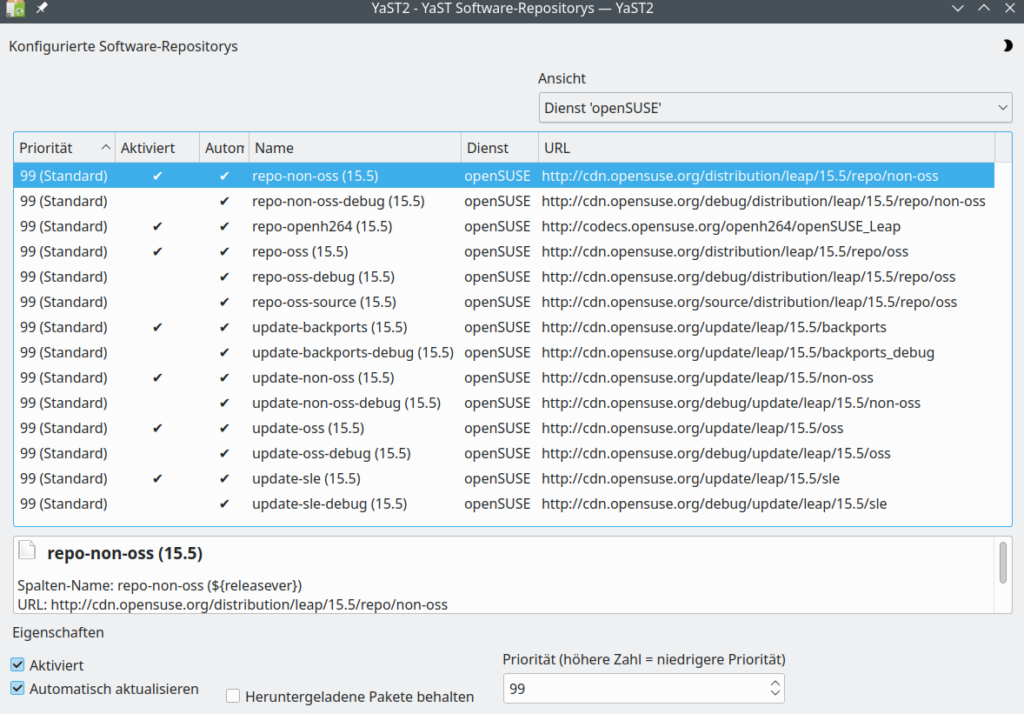

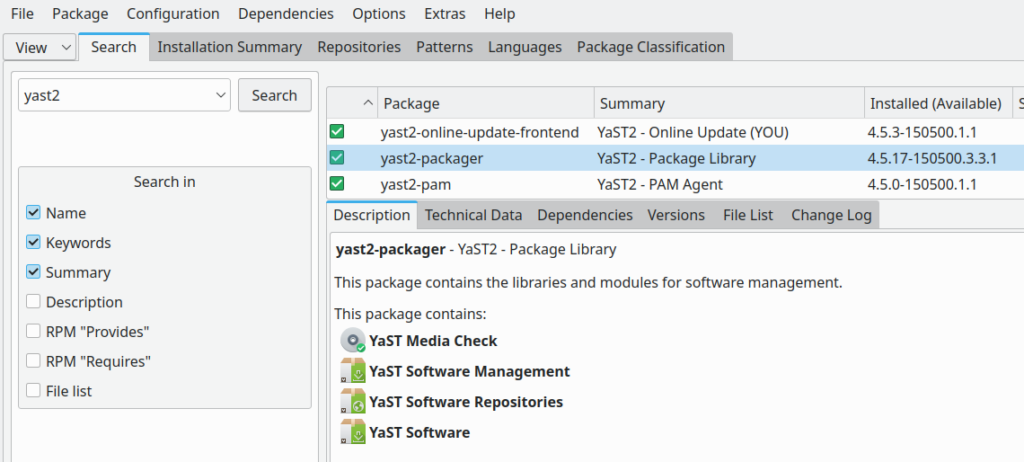

[At least as long as I did not try to add special SW repositories with YaST2. Opensuse has built a remarkable bug into YaST2’s software (= RPM) management. More about this in another post.]

The good news is: The laptop works like a charm with Leap 15.5 and KDE. And it is now less noisy (ventilation!) than with Windows 10. All special keys for controlling screen brightness and speaker levels work. Attaching Kontact (with KMail) and Thunderbird to our IMAP server was no problem. Multimedia programs like Clementine do their work. So do our standard browsers (FF, Chromium, Opera). Yesterday we watched the Norwegian handball team play against Slovenia during the EM in Germany via a live stream in Firefox, both on this laptop and on an HDMI-attached HD TV that extended the laptop screen. The TV was recognized automatically and, after we had answered a question about the direction in which we wanted to extend the screen, activated automatically.

After a short configuration, network connections can be set up via Ethernet cable if we want to work in our inner LAN, which contains Linux systems only. These systems are configured via firewalls to trust each other partially and with respect to certain services. Internet connections are routed through a perimeter firewall. Alternatively, my wife can connect directly to a WLAN of our router when she just wants to access the Internet. NetworkManager, priorities for automatic connections and the detection of a plugged-in network cable are used to let the system make an adequate choice automatically: If the Ethernet cable is plugged in, only the cable-based connection is used. If the cable is unplugged, WLAN is activated automatically. And vice versa.
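The priorities for automatic connections mentioned above can be set with nmcli. What follows is only a minimal sketch, not our exact setup: the helper name `set_autoconnect_priority`, the `NMCLI` override variable and the profile names in the usage note are assumptions for illustration. `connection.autoconnect-priority` ranks the profiles that NetworkManager may activate automatically; higher values are preferred.

```shell
#!/bin/bash
# Sketch (hypothetical helper): give a NetworkManager profile an autoconnect
# priority. Set NMCLI=echo for a dry run that only prints the arguments.
NMCLI="${NMCLI:-nmcli}"

# Usage: set_autoconnect_priority PROFILE PRIORITY
set_autoconnect_priority() {
    local profile="$1" priority="$2"
    # Enable autoconnect and rank this profile among the candidates.
    "$NMCLI" connection modify "$profile" \
        connection.autoconnect yes \
        connection.autoconnect-priority "$priority"
}
```

With hypothetical profile names, one could call `set_autoconnect_priority "Home LAN" 10` and `set_autoconnect_priority "Home WLAN" 5`. The real names are listed by `nmcli connection show`.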

A small script for avoiding double connections (LAN and WiFi) can be added to the directory “/etc/NetworkManager/dispatcher.d”. It is discussed in “man nmcli-examples” and at [1]. I recommend that all users of Linux laptops with NetworkManager study the little script:

    #!/bin/bash
    export LC_ALL=C

    enable_disable_wifi ()
    {
        result=$(nmcli dev | grep "ethernet" | grep -w "connected")
        if [ -n "$result" ]; then
            nmcli radio wifi off
        else
            nmcli radio wifi on
        fi
    }

    if [ "$2" = "up" ]; then
        enable_disable_wifi
    fi

    if [ "$2" = "down" ]; then
        enable_disable_wifi
    fi

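Do not forget to give the script executable rights. NetworkManager also expects dispatcher scripts to be owned by root and not to be writable by group or others. For us the script works perfectly.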
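The decision the script makes (WiFi off as soon as an Ethernet device is connected) can also be written as a small function that parses nmcli's terse output instead of grepping the human-readable table. This is only a sketch of a variant, not the script from the man page; the function name `wifi_target_state` is my own choice. It counts only the exact state “connected”.

```shell
#!/bin/bash
# Sketch (hypothetical helper): decide the desired WiFi radio state.
# Reads "TYPE:STATE" lines on stdin, as printed by
#   nmcli -t -f TYPE,STATE device
# and prints "off" if an ethernet device is connected, otherwise "on".
wifi_target_state() {
    local type state
    while IFS=: read -r type state; do
        if [ "$type" = "ethernet" ] && [ "$state" = "connected" ]; then
            echo off
            return 0
        fi
    done
    echo on
}
```

Inside a dispatcher script it could be used as `nmcli radio wifi "$(nmcli -t -f TYPE,STATE device | wifi_target_state)"`. Keeping the decision in a function of its own makes it possible to test it with sample input, without touching the real network.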

Do we miss any Windows SW on the old laptop?

Straight answer: No. My wife has used GIMP, Gwenview, showFoto (with ufraw) and Inkscape for image manipulation for years, GIMP and Inkscape also on Windust. We both use LibreOffice Draw for drawings and simple graphics. LibreOffice (with Writer, Calc, Impress) has been a sufficient and convenient replacement for MS Office for many years already. For creating tax reports we use LinHaBU. The little web development we still do these days can be done with Eclipse. Linux offers a variety of FTP tools. All in all, our needs are covered and our requirements very well fulfilled.

The old laptop will get a hopefully long 2nd life with Linux at our home in Norway.

Some security considerations

One thing that may be important for professional people: You may want to have a fully encrypted system. This can, of course, be achieved with LUKS. And contrary to an often heard argument, this does not require an unencrypted and therefore insecure “/boot” partition. I have written articles in this blog on setting up a fully encrypted Linux system on laptops with the help of LUKS.
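Whether “/boot” on an existing installation really sits on an encrypted device can be checked with findmnt and lsblk. The following is a rough sketch with hypothetical helper names. It assumes util-linux is installed and it only looks for a dm-crypt layer somewhere below the filesystem, so it does not distinguish LUKS from plain dm-crypt.

```shell
#!/bin/bash
# Sketch (hypothetical helpers): check whether the filesystem holding a path
# sits on top of a dm-crypt layer.

# Reads device TYPE values (one per line) on stdin and succeeds
# if one of them is "crypt".
has_crypt_layer() {
    grep -qw "crypt"
}

# Usage: path_is_encrypted PATH
path_is_encrypted() {
    local source
    # -v strips btrfs subvolume suffixes like "[/@/...]" from the source name
    source=$(findmnt -n -v -o SOURCE --target "$1") || return 2
    # -s walks from the device down to the underlying partitions and disks
    lsblk -s -n -o TYPE "$source" | has_crypt_layer
}
```

A call like `path_is_encrypted /boot && echo "/boot is encrypted"` then tells you whether the directory is covered by the encryption.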

TPM offers options to detect HW modifications of your system. See e.g. [5]. This is certainly useful. But if you have an old laptop with Windust, you have probably lived with many more, SW-related security risks for a long time. So, this is no reason to discard your laptop or to replace it with a new one. Most Windust users that I know do not even have BitLocker encryption active on their systems.

While the BitLocker encryption of Windust may require TPM 2.0 to become safe again (unsafe SHA-1 support in TPM 1.2), we can gain a high level of security regarding disk encryption on Linux with LUKS alone. One can even find some arguments why TPM (2.0) may not make fully encrypted Linux laptops more secure. Opensuse and other distributions do support TPM 2.0 and secure boot. So, the question is not whether some Linux distribution actively supports TPM, but whether we really need or want to use it. See e.g. the discussion and warnings in [2].

In my private opinion, the old game of Windows supporting the HW-industry and vice versa just goes into a new cycle and the noise about HW- and firmware based attacks ignores at least equally big risks regarding SW (OS and applications).

Even under security considerations I see no major reason why one should not use older laptops with a full LUKS encryption. A major difference is that we do not put secrets and keys for an automatic decryption into a TPM chip which could have backdoors. A LUKS setup is a bit more inconvenient than BitLocker with TPM, but with all partitions encrypted (no separate /boot partition!) it is not really less safe. The big advantage of LUKS full encryption without TPM is: No knowledge of the key passphrase, no decryption. But this is all stuff for a more detailed investigation. A fully LUKS encrypted Linux setup would in any case probably be significantly safer than an old Windust installation with BitLocker and TPM 1.x.

If your security requirements are not top level, most reasons not to use old laptops are not valid in my opinion. So, give Linux a try on your old machines before throwing them away.

Conclusion and some preliminary security considerations

Old laptops can remain a valuable resource, even if they are not fit for Windows 11 according to MS. Often enough they run very well under Linux. If you have major security requirements, consider full disk encryption with LUKS. This may not be as safe as LUKS with TPM 2.0 and a two-phase authentication, which you would have to take care of during setup, but it may be much safer than the Windust installation you have used before.

And do not forget: TPM is no protection against attacks which use vectors against SW-vulnerabilities.

Links

[1] https://unix.stackexchange.com/questions/346778/preventing-double-connection-over-wlan0-and-usb-0-in-network-manager-gnome

[2] TPM and Arch Linux: https://wiki.archlinux.org/title/Trusted_Platform_Module

See also the warnings in

https://wiki.archlinux.org/title/User:Krin/Secure_Boot,_full_disk_encryption,_and_TPM2_unlocking_install

[3] Bruce Schneier on TPM attacks: See https://www.schneier.com/tag/tpm/ and

https://www.schneier.com/blog/archives/2021/08/defeating-microsofts-trusted-platform-module.html

[4] TPM 2.0 vulnerabilities: https://www.tomsguide.com/news/billions-of-pcs-and-other-devices-vulnerable-to-newly-discovered-tpm-20-flaws

[5] A positive look on TPM from Red Hat: https://next.redhat.com/2021/05/13/what-can-you-do-with-a-tpm/