KVM/Qemu-based virtual machines (VMs) are a nice and comfortable part of my life with Linux. I use them both as dedicated entities for various services on a server host and as experimental test or work environments on my Linux workstation. So far, my access to server VMs has been dominated by SSH connections and CLI interaction, whereas working with VMs on my PC was and is characterized by graphical user interfaces.

In the latter case we speak of desktop virtualization: connections to the virtual guests are set up locally via sockets (or via a network port on the loopback device “lo”) on the very same PC or workstation that also serves as the KVM host. Supported by a client/server model for graphics, KVM/Qemu provides several capable methods for the local display of a VM’s graphical output. And by graphical output I mean a desktop environment such as KDE, XFCE or Gnome.

For KVM/Qemu, the combination of

- the so-called “Spice display” and the Spice protocol

- drivers for capable virtual video devices (such as QXL or Virtio)

offers a very convenient, high-performance solution for presenting a VM’s graphical desktop. In my experience, it is considerably faster than most VNC variants.

In addition:

With the help of certain Spice clients, one can access a local VM via a desktop display which extends over up to four “screens”. All of these “screens” are displayed within scalable windows of your preferred desktop environment on your Linux machine, e.g. KDE or LXDE. This means that you can work graphically with a full-fledged KDE desktop of the VM, displayed in multiple windows within a KDE or Gnome environment on your local KVM/Qemu host (e.g. your Linux workstation).

Can we get the same comfort with remote connections over a LAN? With encryption tunnels? The answer is yes, and the related Qemu and Libvirt configurations are the main topics of this article series.

Why do I write about these subjects at all? Recently, I needed to work remotely with a KVM/Qemu guest on an Opensuse Leap 15.2 server. I wanted to use an SSH-tunneled connection with password authentication for a special graphical Spice client, namely “virt-viewer”. Being a bit naive, I ran into some major trouble. This in turn forced me to look more closely at the different possibilities for remote connections to KVM/Qemu-based VMs. Unfortunately, I found Opensuse’s documentation on Spice sparse, to say the least. Yet Spice becomes especially interesting, and somewhat complex, once remote access methods, encryption and authorization come into play.

In this article series I discuss some of the many configuration options I have experimented with. I restrict myself to Spice-based solutions with the clients “virt-viewer”, “remote-viewer” and “virt-manager”, in combination with SSH and TLS. I hope my experiences help other Opensuse Leap users to set up convenient remote solutions.

After giving some documentation links, I start with an overview of selected remote access scenarios. They are the most important ones and are all based on network ports. In the coming posts, however, I will also cover local access scenarios based on Unix sockets, as well as their combination with SSH.

Limitations

Unfortunately, I have to dampen the expectations of readers who are looking for “multi-user remote desktop solutions”. This is not what the present Spice solutions with the named clients offer. See below.

A topic which I will not address in the forthcoming articles is the setup of a local VM with OpenGL acceleration on the graphics device of the KVM host. With Nvidia cards and drivers this is unfortunately a mess, and in my opinion it is Nvidia’s fault. The situation is, by the way, very similar to Nvidia’s EGL-based attempt at a Wayland solution. All of this stems from Nvidia’s own OpenGL handling and its refusal to support GBM. So let us not go there, to avoid frustration …

Basic preparations of a KVM/Qemu VM: Documentation and guides?

I assume that you are already familiar with the basic setup and configuration of a KVM/Qemu-based virtual machine, e.g. with virsh or virt-manager. If not, you will find valuable hints for Opensuse Leap systems in SUSE’s “Virtualization Guide”.

However, as said above, Opensuse’s documentation on virtualization does not give you much information about Spice. We only get a glimpse of it in the sections on “virsh” and “Qemu”. The information there is rather scattered; one does not get a broader context. You may get a better understanding by reading the introductory pages on the site “https://www.spice-space.org/”, especially the user manual:

https://www.spice-space.org/spice-user-manual.html.

Regarding special configuration settings of a VM, see https://libvirt.org/formatdomain.html.

Spice with multiple screens requires a non-default configuration of the VM’s QXL device

For a basic Spice setup there is not too much to do; “just” choose Spice for the display-settings of your VM and configure the VM’s QXL graphics device. Such a device corresponds to something like a virtual graphics card. Well, it sounds easy, but when I tried it myself, I had to experiment. To help others a bit: I have already discussed in depth how we can configure a KVM/Qemu guest and its QXL device such that it supports multiple scalable high-resolution screens for Spice clients. See the following articles:

KVM/qemu mit QXL – hohe Auflösungen und virtuelle Monitore im Gastsystem definieren und nutzen – I

KVM/qemu mit QXL – hohe Auflösungen und virtuelle Monitore im Gastsystem definieren und nutzen – II

KVM/qemu mit QXL – hohe Auflösungen und virtuelle Monitore im Gastsystem definieren und nutzen – III

KVM/qemu mit QXL – hohe Auflösungen und virtuelle Monitore im Gastsystem definieren und nutzen – IV

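These posts were written in German (the series title translates to “KVM/qemu with QXL: defining and using high resolutions and virtual monitors in the guest system”), but with a little goodwill one can understand the configuration settings and related commands.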

An alternative today is to use a “virtio” video device in the VM’s configuration. I shall come back to this point in a later article.

What I did not cover in my previous posts on Spice was the broader spectrum of access options to a KVM/Qemu-based VM with libvirt/Spice clients, both for local and remote connections. I only discussed how to use the “remote-viewer” client locally, despite the fact that its name already indicates something else. What I also disregarded in my articles from 2017 is the fact that there are various (remote) access methods: direct ones and some that go through a libvirt layer on the KVM host. As always with Linux, there are multiple ways to achieve your objectives :-).

However, when we use Spice instead of VNC, we are talking about a “one person” or “one seat” solution.

Spice and libvirt => multiple methods to access exactly one graphical console of a KVM/Qemu based VM

What you should be aware of when you think of using Spice for a graphical access to a KVM/Qemu VM is the following:

1. The Spice protocol and the Spice display for KVM/QEMU based VMs do NOT provide a multi-user service for graphical remote terminals or remote desktop displays – at least not in their default configuration.

According to what I have read, anything in this direction still seems to be experimental. Instead:

2. We access a data stream describing the graphical contents of one or multiple screens of a console which is virtually “attached” to the VM.

Let us call this device a multi-screen Spice console of the VM.

The Spice console solution is fully integrated into the QEMU emulator. The console screen data and the related sound can, however, also be displayed and played on a remote Linux system. And here we face a point which makes the user’s life both convenient and complex at the same time:

3. The Spice console of a VM can be accessed by various protocols or protocol combinations. The Spice console is fully integrated with Qemu and directly accessible there via a (network) socket, but it is in addition accessible via libvirt components. This leads to a variety of connectivity options.

These aspects are easily underestimated: Remote access to multiple screens requires sufficient network bandwidth. The second point excludes the use of Spice for multi-user scenarios. The third point opens up a range of local and remote access possibilities; some are more complicated to configure, some are more secure than others. Some options are deactivated in a default libvirt installation (not only on Opensuse Leap systems).

The most important connection options for standard libvirt/spice clients are summarized in the following schematic drawing.

The Spice console of a KVM/Qemu VM

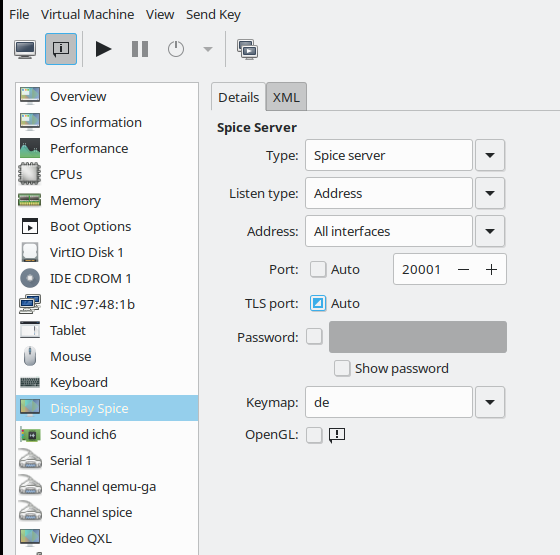

The drawing shows a Linux KVM host at its center. KVM is the virtualization support in the Linux kernel which the QEMU emulator uses for hardware acceleration, thus making QEMU fast. Two virtual machines, VM1 and VM2, are shown. VM1’s configuration includes a Spice (console) display, e.g. set up according to the following excerpt of a VM configuration example (done with virt-manager):

Note that the configuration item is called “display” in virt-manager (and “graphics” in the XML-configuration of a VM). I think that in the case of Spice “Console” would have been a better name. I should also warn you that the displayed configuration may lead to security risks – if not accompanied by protective measures.

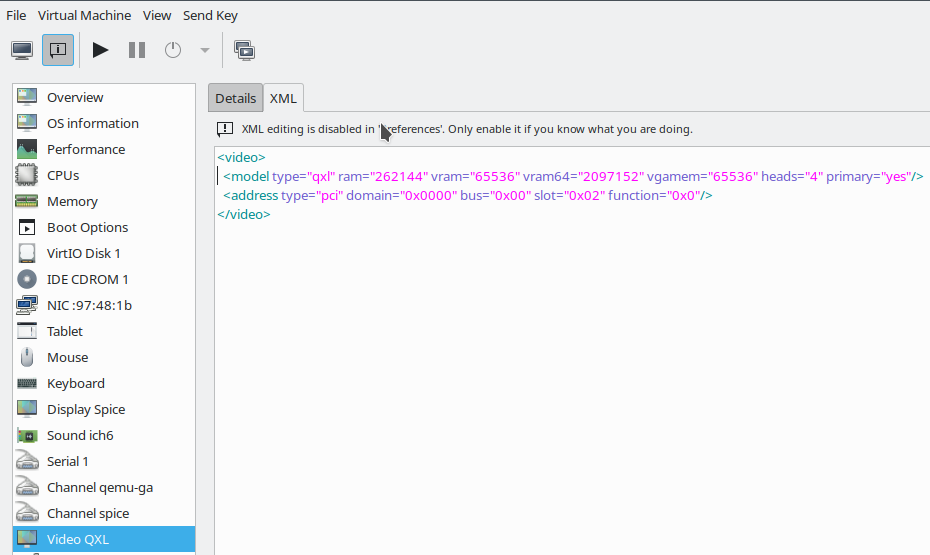
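In the VM’s XML definition, the settings of this dialog end up in a `<graphics>` element. The following Python sketch generates such an element with the standard library’s `xml.etree.ElementTree`; Python is used here only as a neutral way to show the structure. It assumes port 20001 (the example port used in this article) and a listen address of 0.0.0.0, i.e. all interfaces of the host. The listen address is my assumption, not a value taken from the screenshot, and an open listener without a password is exactly what makes such a configuration risky.

```python
import xml.etree.ElementTree as ET


def spice_graphics(port, listen_address="0.0.0.0"):
    """Return a libvirt <graphics type='spice'> element with a fixed port.

    autoport='no' keeps libvirt from choosing a port on its own, so the
    console is always reachable on `port`. No passwd attribute is set,
    which corresponds to the empty password field in virt-manager.
    """
    graphics = ET.Element("graphics", {
        "type": "spice",
        "port": str(port),
        "autoport": "no",
        "listen": listen_address,  # assumption: listen on all interfaces
    })
    # libvirt also describes the listen address as a child element.
    ET.SubElement(graphics, "listen", {"type": "address", "address": listen_address})
    return graphics


xml_text = ET.tostring(spice_graphics(20001), encoding="unicode")
print(xml_text)
```

An element like the printed one could be placed in the `<devices>` section of the VM definition, for example via `virsh edit <vm>`.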

Note also that “virt-manager” does not allow for the detailed QXL configuration required for multiple screens in the dialog shown. But you can edit the VM’s XML definition for this purpose via the second tab (or with an editor; on Opensuse Leap systems you find the XML files for VMs in the directory “/etc/libvirt/qemu”):

The QXL configuration displayed here is an example – you may choose other parameters. (Or test out a “virtio” device; see a later article).
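As a rough sketch of such a QXL configuration, the following Python snippet builds a `<video>` element whose `<model>` declares four heads (screens). The memory values (in KiB) are placeholders which, to my knowledge, correspond to libvirt’s usual QXL defaults; they are not the parameters from my own setup. For several high-resolution screens you will probably need larger `vram` and `vgamem` values, as discussed in the German articles listed above.

```python
import xml.etree.ElementTree as ET


def qxl_video(heads=4, ram_kib=65536, vram_kib=65536, vgamem_kib=16384):
    """Return a libvirt <video> element with a multi-head QXL model.

    The memory sizes are given in KiB; the defaults are placeholders and
    will likely need to be raised for several high-resolution screens.
    """
    video = ET.Element("video")
    ET.SubElement(video, "model", {
        "type": "qxl",
        "ram": str(ram_kib),
        "vram": str(vram_kib),
        "vgamem": str(vgamem_kib),
        "heads": str(heads),   # number of virtual screens
        "primary": "yes",      # this is the VM's primary video device
    })
    return video


print(ET.tostring(qxl_video(), encoding="unicode"))
```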

“One seat” console

In the lower right part of the first sketch above I have tried to express that a graphical Spice console corresponds to a “one console with one seat” situation. The console is only available for exactly one (authorized) user at a time. Note:

Any user with sufficient access rights may claim the console at any time and throw you off the seat in front of the graphical console screen if you do not secure access to the console by a password (or other measures)!

We did not do this in the configuration example above; see the empty field for the password. I quote from the documentation:

“Spice does not support multiple connections to the same QEMU instance by default. So anybody who will connect to the same host and port can simply take over your session. You can solve this problem by using ticketing.” (see: https://www.spice-space.org/spice-user-manual.html)

“Ticketing” means setting a password for access to the VM’s console screen; but anyone who knows the password can still claim the console, so a password is no real solution for a multi-user situation. To say it clearly:
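In libvirt’s XML, such a ticket is the `passwd` attribute of the `<graphics>` element. The sketch below adds a randomly generated password to an existing Spice `<graphics>` element, using Python’s `secrets` module; the element string in the usage part is a hypothetical example. Keep in mind that libvirt stores this password in plain text in the VM’s definition, and that it only controls who may take over the single seat.

```python
import secrets
import xml.etree.ElementTree as ET


def add_spice_ticket(graphics_xml, password=None):
    """Set the Spice ticket (libvirt's passwd attribute) on a <graphics> element.

    Returns the updated XML text and the password that was set. If no
    password is given, a random URL-safe one is generated.
    """
    graphics = ET.fromstring(graphics_xml)
    if graphics.tag != "graphics" or graphics.get("type") != "spice":
        raise ValueError("expected a <graphics type='spice'> element")
    if password is None:
        password = secrets.token_urlsafe(16)
    graphics.set("passwd", password)
    return ET.tostring(graphics, encoding="unicode"), password


# Hypothetical element without a password, like the configuration shown earlier.
xml_text, ticket = add_spice_ticket("<graphics type='spice' port='20001' autoport='no'/>")
print(xml_text)
```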

If your objective is to establish something like multi-user graphical access to a KVM/QEMU-based virtual machine, with a full desktop display for each user (i.e. a “remote desktop solution”), then consider a headless graphical server configuration of the KVM guest. On a Linux VM, you can achieve this by implementing an X2Go server, a commercial NoMachine server, a VNC server variant or even XRDP. Some of these solutions can easily be combined with SSH.

And do not forget: In a LAN with sufficient bandwidth “ssh -X” may already serve most practical purposes.

But in many cases a local or remote “one seat situation” with a full graphical desktop may be sufficient. Now, let us look at the other parts of the drawing.

Different access options to the Spice console of a KVM/Qemu-based VM

There is a multitude of options to connect to the Spice console (https://www.spice-space.org/spice-for-newbies.html). We shall describe them briefly in this first article. In forthcoming articles we shall have a closer look at each of them.

Option 1: Simple connection with “remote-viewer” via a Spice (network) port

The Qemu emulator is able to handle requests over the Spice protocol on its own, i.e. without any special libvirt support. Spice offers connections to the console either via a pure Unix socket (local solution) or via a network port (remote solution).

A network oriented solution, which can be realized with the Spice client “remote-viewer” is depicted on the left side of our central sketch:

You access the Spice console of a specific VM directly via a specific network port. Spice ports have to be configured independently for each VM: if you have two VMs whose Spice consoles you want to access independently, you need to define two different ports. In fact, you will not be able to start a VM whose Spice console is associated with the same port as that of another VM that is already running.
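Because each running VM needs its own Spice port, a quick consistency check across VM definitions can save you a failed start. The following Python sketch is my own illustration, not a libvirt tool: it takes domain XML texts (for example the output of `virsh dumpxml <vm>` for each VM) and reports fixed Spice ports, including TLS ports, that would collide if the VMs ran at the same time. The VM names and XML snippets in the usage part are hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict


def find_spice_port_conflicts(domains):
    """Return {port: [vm names]} for every fixed Spice port used more than once.

    `domains` maps a VM name to its libvirt domain XML. Graphics elements
    with autoport='yes' (or port -1) are skipped, because libvirt assigns
    a free port for them when the VM starts.
    """
    users = defaultdict(list)
    for name, xml_text in domains.items():
        root = ET.fromstring(xml_text)
        for graphics in root.iter("graphics"):
            if graphics.get("type") != "spice" or graphics.get("autoport") == "yes":
                continue
            for attr in ("port", "tlsPort"):
                value = graphics.get(attr)
                if value is not None and int(value) > 0:
                    users[int(value)].append(name)
    return {port: names for port, names in users.items() if len(names) > 1}


# Hypothetical definitions: vm1 and vm2 both claim port 20001.
domains = {
    "vm1": "<domain><devices><graphics type='spice' port='20001' autoport='no'/></devices></domain>",
    "vm2": "<domain><devices><graphics type='spice' port='20001' autoport='no'/></devices></domain>",
    "vm3": "<domain><devices><graphics type='spice' port='20002' autoport='no'/></devices></domain>",
}
conflicts = find_spice_port_conflicts(domains)
print(conflicts)
```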

Properties of a remote-viewer connection over a network port:

- Default port? The standard Spice port is 5900 (which is also the port used by VNC). In the example displayed above I instead used port 20001. (Do not forget to open your firewalls for your chosen ports!)

- Security by encryption? The connection to the port is not secured by any encryption. However, it is possible to define an additional specific TLS-secured port. We shall cover spice + TLS protocol combinations in another article.

- User authentication on the KVM server host? Can optionally be achieved via SASL.

- Preferred Spice client? The Spice client which uses the Spice protocol directly and without libvirt is “remote-viewer“.

- Works locally and/or remotely? Both.
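The URI for this direct connection simply names the host and the Spice port. The Python sketch below assembles the corresponding remote-viewer command line; the host name “kvmhost.example” is a placeholder, and port 20001 is the example port from above. `--full-screen` is an existing remote-viewer option, included here only as an optional extra.

```python
import shlex


def remote_viewer_command(host, port, full_screen=False):
    """Return the argv to connect remote-viewer directly to a Spice port."""
    argv = ["remote-viewer"]
    if full_screen:
        argv.append("--full-screen")
    argv.append(f"spice://{host}:{port}")
    return argv


# Hypothetical KVM host; prints the command instead of running it.
print(shlex.join(remote_viewer_command("kvmhost.example", 20001)))
```

The returned list could be handed to `subprocess.run` to actually start the viewer.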

Some additional hints:

In some old articles or posts on the Internet you may find descriptions of “virt-viewer” together with URIs like “spice://host:5900”. This does not work any more – at least on my systems.

By setting proper attributes for the VM, we can in addition enforce that it only accepts secured connections. For those who are eager to experiment already: where do we learn about such machine-specific settings in XML format? The answer is:

https://libvirt.org/formatdomain.html
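As an illustration of such attributes, the following Python sketch generates a Spice `<graphics>` element that offers a TLS port and sets `defaultMode='secure'`, so that all Spice channels must use the encrypted connection. This is a sketch under assumptions rather than a tested configuration: the TLS port 20002 is arbitrary, I assume that omitting the plain `port` attribute leaves only the TLS port open, and Spice TLS additionally requires `spice_tls = 1` and certificates configured in libvirt’s `qemu.conf`, which a later article covers.

```python
import xml.etree.ElementTree as ET


def secure_spice_graphics(tls_port, listen_address="0.0.0.0"):
    """Return a Spice <graphics> element that only accepts TLS connections.

    defaultMode='secure' makes every Spice channel require TLS. No plain
    'port' attribute is set (assumption: this leaves only the TLS port).
    """
    graphics = ET.Element("graphics", {
        "type": "spice",
        "autoport": "no",
        "tlsPort": str(tls_port),
        "defaultMode": "secure",
    })
    ET.SubElement(graphics, "listen", {"type": "address", "address": listen_address})
    return graphics


print(ET.tostring(secure_spice_graphics(20002), encoding="unicode"))
```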

Option 2: Connection with “virt-viewer” or “virt-manager” via libvirtd and a defined TCP port

Besides “remote-viewer” there is another Spice client called “virt-viewer”. This client is particularly useful when you work on a KVM host with multiple VMs. What some admins do not know is that it can connect via a plain TCP socket on a specific port. You do not need SSH or TLS if you can disregard security. This corresponds pretty much to what we got with remote-viewer and a Spice port. The difference is that we access the socket via the mediation of libvirtd components:

virt-viewer requires URIs which specify the target hypervisor for the libvirt daemon, in our case “qemu”. Such URIs differ from the URIs used for remote-viewer, as they always contain a two-fold protocol definition (hypervisor driver plus transport).
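Libvirt URIs have the general form `driver+transport://[user@]host[:port]/path`. For our purposes the driver is `qemu`, the transport is `tcp`, `ssh` or `tls`, and the path `/system` selects the system-wide libvirt daemon. The small Python helper below merely composes such strings; the host and user names in the usage lines are placeholders.

```python
def libvirt_uri(transport, host, user=None, port=None, driver="qemu", path="system"):
    """Compose a libvirt connection URI such as qemu+tcp://host/system."""
    authority = host
    if user:
        authority = f"{user}@{authority}"
    if port is not None:
        authority = f"{authority}:{port}"
    return f"{driver}+{transport}://{authority}/{path}"


print(libvirt_uri("tcp", "kvmhost.example"))                           # plain TCP (option 2)
print(libvirt_uri("ssh", "kvmhost.example", user="alice", port=2222))  # SSH on a custom port
```

virt-viewer takes such a URI via its `--connect` option, followed by the name of the VM.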

We shall apply such URIs in a later article. In addition, this access method requires a special (non-default) configuration of the libvirt daemon. Among other things, you of course have to define the port. The ability to listen on an insecure TCP port is normally deactivated in the libvirtd configuration; we have to activate it manually. We shall take a closer look at this, too.
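For orientation, the relevant options in `/etc/libvirt/libvirtd.conf` are `listen_tcp`, `tcp_port` and `auth_tcp`. The Python sketch below only renders such lines as text; the chosen values (TLS listener off, SASL authentication) are my assumptions for an unencrypted test setup. Depending on the libvirt version and packaging, the daemon may use systemd socket activation; in that case `listen_tcp` and `tcp_port` are ignored and you enable the `libvirtd-tcp.socket` unit instead, while `auth_tcp` still applies. Setups without socket activation additionally need libvirtd to be started with `--listen`.

```python
def libvirtd_tcp_settings(port=16509, auth="sasl"):
    """Render libvirtd.conf lines that enable the plain TCP listener.

    auth may be "sasl" or "none"; "none" means no authentication at all.
    listen_tls = 0 is set on the assumption that no TLS certificates exist yet.
    """
    if auth not in ("sasl", "none"):
        raise ValueError("auth_tcp must be 'sasl' or 'none'")
    settings = {
        "listen_tls": "0",
        "listen_tcp": "1",
        "tcp_port": f'"{port}"',
        "auth_tcp": f'"{auth}"',
    }
    return "".join(f"{key} = {value}\n" for key, value in settings.items())


print(libvirtd_tcp_settings(), end="")
```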

Unencrypted access to the Spice console via a special libvirtd port may be a useful solution for KVM guests sitting in a secured physical LAN-segment or behind a virtual bridge/firewall in a virtualized LAN-segment of the KVM host.

Properties of a virt-viewer connection via a TCP port

- Default port? 16509.

- Security by encryption? The connection to the port is not secured by any encryption. However, we have other options for virt-viewer to connect to the VM via SSH or TLS secured connections. See below.

- Preferred Spice client?: virt-viewer (You cannot provide a valid URI to remote-viewer this way). However, this connection option also works with “virt-manager” (and its included graphical access to the Spice console).

- User authentication on the KVM host? Can be achieved via SASL.

- Works locally and/or remotely? Both.

Note that access to libvirtd must be allowed for the user invoking the connection! On Opensuse Leap systems this can be configured by some policy and/or libvirtd settings.

Option 3: Connection with “virt-viewer” or “virt-manager” via SSH and libvirtd

A very convenient method to get a secure connection with Linux is to use SSH encryption. Therefore, we are not astonished to find that “virt-viewer” can be combined with SSH. This connection method also requires a special URI-format. And again: access to libvirtd must be allowed for the user using this type of connection.

Properties of a virt-viewer connection via an SSH port:

- Default port? 22 or otherwise the defined SSH-port on your system.

- Security by encryption? SSH.

- Preferred Spice client?: virt-viewer (You cannot provide a valid URI to remote-viewer this way). However, this connection option also works with “virt-manager” (and its included graphical access to the Spice console).

- User authentication? Via SSH (preferably public-key authentication)

- Works locally and/or remotely? Both.
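A sketch of the corresponding command lines, again composed in Python: both virt-viewer and virt-manager accept the libvirt URI via their `--connect` option, and virt-viewer additionally takes the name of the VM. User, host and VM names are hypothetical; the optional SSH port covers systems where SSH does not listen on port 22.

```python
import shlex


def ssh_uri(user, host, ssh_port=None):
    """Return a qemu+ssh libvirt URI; the port part is only added if given."""
    authority = f"{user}@{host}"
    if ssh_port is not None:
        authority += f":{ssh_port}"
    return f"qemu+ssh://{authority}/system"


def virt_viewer_ssh(user, host, vm_name, ssh_port=None):
    """Return argv for virt-viewer connecting to one VM through libvirtd over SSH."""
    return ["virt-viewer", "--connect", ssh_uri(user, host, ssh_port), vm_name]


def virt_manager_ssh(user, host, ssh_port=None):
    """Return argv for virt-manager connecting to the remote libvirtd over SSH."""
    return ["virt-manager", "--connect", ssh_uri(user, host, ssh_port)]


print(shlex.join(virt_viewer_ssh("alice", "kvmhost.example", "vm1")))
print(shlex.join(virt_manager_ssh("alice", "kvmhost.example", ssh_port=2222)))
```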

Both options 2 and 3 work with virt-manager, too. However, virt-manager does not provide you with a multi-screen access to the Spice console.

Note that a combination of SSH and remote-viewer is also possible; but then you would open an “ssh -X” connection first and run remote-viewer on the KVM/Qemu host, not on the network client system. I will present such a solution in a later article, too.
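To indicate what such a setup could look like, the sketch below builds the command in Python: `ssh -X` forwards X11, and remote-viewer, started on the KVM host, connects to the Spice port there via `localhost`. User, host and port are placeholders; the sketch assumes that the host’s sshd permits X11 forwarding and that the Spice port is reachable on the host’s loopback interface.

```python
import shlex


def ssh_x_remote_viewer(user, host, spice_port, ssh_port=22):
    """Return argv that runs remote-viewer on the KVM host via X11 forwarding."""
    return [
        "ssh", "-X", "-p", str(ssh_port), f"{user}@{host}",
        # Executed on the KVM host: connect to the local Spice port.
        "remote-viewer", f"spice://localhost:{spice_port}",
    ]


print(shlex.join(ssh_x_remote_viewer("alice", "kvmhost.example", 20001)))
```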

Conclusion

The Spice console of KVM/Qemu offers access to the graphical desktop of a virtual machine guest. Spice clients such as “remote-viewer”, “virt-viewer” and “virt-manager” can be used locally and remotely. The first two clients offer multiple screens, but all clients provide a one-seat solution only. This may, however, be sufficient for many use cases. There is a variety of local and remote access methods, and connections can be secured by TLS or SSH encryption.

In the next article

I shall have a closer look at a local connection from the “remote-viewer” client to the Spice console of a VM.

Links

Spice console and Spice protocol

https://linuxhint.com/configure_spice_server_debian_10/

https://www.spice-space.org/spice-user-manual.html

https://www.spice-space.org/spice-for-newbies.html

Activate spice

https://www.linux-kvm.org/page/SPICE

https://linux-blog.anracom.com/2017/08/15/kvmqemu-mit-qxl-hohe-aufloesungen-und-virtuelle-monitore-im-gastsystem-definieren-und-nutzen-iv/

https://octetz.com/docs/2020/2020-05-06-linux-hypervisor-setup/

Nvidia 🙁

https://www.phoronix.com/scan.php?page=news_item&px=XDC2016-Device-Memory-API

Further articles in this series

KVM/Qemu VMs with a multi-screen Spice console – VIII – VM and user specific restrictions for remote-viewer connections – iptables and sudo

KVM/Qemu VMs with a multi-screen Spice console – VII – remote-viewer, qemu and SASL authentication

KVM/Qemu VMs with a multi-screen Spice console – VI – remote access with remote-viewer and TLS encryption

KVM/Qemu VMs with a multi-screen Spice console – V – remote access via remote-viewer, a network port and a SSH-tunnel

KVM/Qemu VMs with a multi-screen Spice console – IV – remote access via SSH, remote-viewer and a Unix socket

KVM/Qemu VMs with a multi-screen Spice console – III – local access with remote-viewer via a Unix socket

KVM/Qemu VMs with a multi-screen Spice console – II – local access with remote-viewer via a network port