Sometimes you may need to analyze the behavior and responses of a web server or a REST service to certain requests. And sometimes you are restricted to the command line of a Linux system (e.g. during penetration testing). Then you have to type and send HTTP commands directly. While this is trivial with telnet over an unencrypted connection on port 80, you must use a tool like openssl for HTTPS servers, which expect a TLS tunnel.

A quick search on the Internet will show you that you should be able to use “openssl” on a Linux system in the following form:

openssl s_client -connect YOUR_TARGET_WEB_DOMAIN:443

Or – if you do not want to look at certificates and related CA chains in detail – with an additional option “-quiet”:

openssl s_client -quiet -connect YOUR_TARGET_WEB_DOMAIN:443

YOUR_TARGET_WEB_DOMAIN has to be replaced, of course, by a valid host name (a bare domain such as www.debian.org, not a full URI with “https://”). To restrict the encryption to TLS V1.2 you would instead use:

openssl s_client -quiet -tls1_2 -connect YOUR_TARGET_WEB_DOMAIN:443

For some servers an additional option “-ign_eof” can be helpful: It prevents s_client from shutting down the connection as soon as an “end of file” [EOF] is reached on its input. Without it the connection may be closed before the response arrives, meaning the response will not be shown in some cases (e.g. when the request is piped in). The option “-quiet” triggers the “-ign_eof” behavior implicitly. But keep in mind that this option does not prevent any timeouts on the connection imposed by the server.

A problem with the interactive “openssl s_client” command-line on Linux systems

After entering the above commands at the command prompt of a Linux shell (e.g. bash) you will first see some information regarding the connection handshake and the establishment of the encryption tunnel. Then you end up on a line where you can interactively enter HTTP commands. You expect to successfully enter commands in the following way:

We type “GET / HTTP/1.1” (without the quotes) => we press the “ENTER”-key => we get a new line => we type “Host: YOUR_TARGET_WEB_DOMAIN” (without the quotes) => we press the “ENTER”-key twice.

You may try this sequence with “google.com”. This will work! The response tells you, however, that the document has moved to “www.google.com”. At the new address everything works, too.

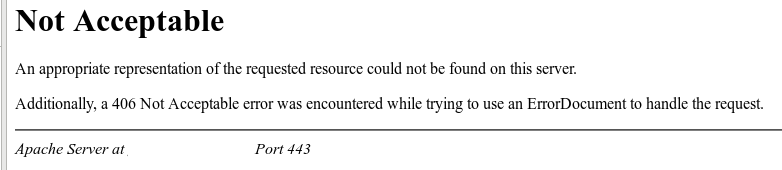

So far so good! But let us try the given recipe with another domain: www.debian.org. Using the command line within “s_client” then leads to an error:

.....

Start Time: 1609195616

Timeout : 7200 (sec)

Verify return code: 0 (ok)

Extended master secret: no

Max Early Data: 0

---

read R BLOCK

GET / HTTP/1.1

HTTP/1.1 400 Bad Request

Date: Mon, 28 Dec 2020 22:47:04 GMT

Server: Apache

X-Content-Type-Options: nosniff

X-Frame-Options: sameorigin

Referrer-Policy: no-referrer

X-Xss-Protection: 1

Strict-Transport-Security: max-age=15552000

Content-Length: 291

Connection: close

Content-Type: text/html; charset=iso-8859-1

<!DOCTYPE HTML PUBLIC "-//IETF//DTD HTML 2.0//EN">

<html><head>

<title>400 Bad Request</title>

</head><body>

<h1>Bad Request</h1>

<p>Your browser sent a request that this server could not understand.<br />

</p>

<hr>

<address>Apache Server at www.debian.org Port 443</address>

</body></html>

closed

You do not even get a chance to enter the “Host: …” line!

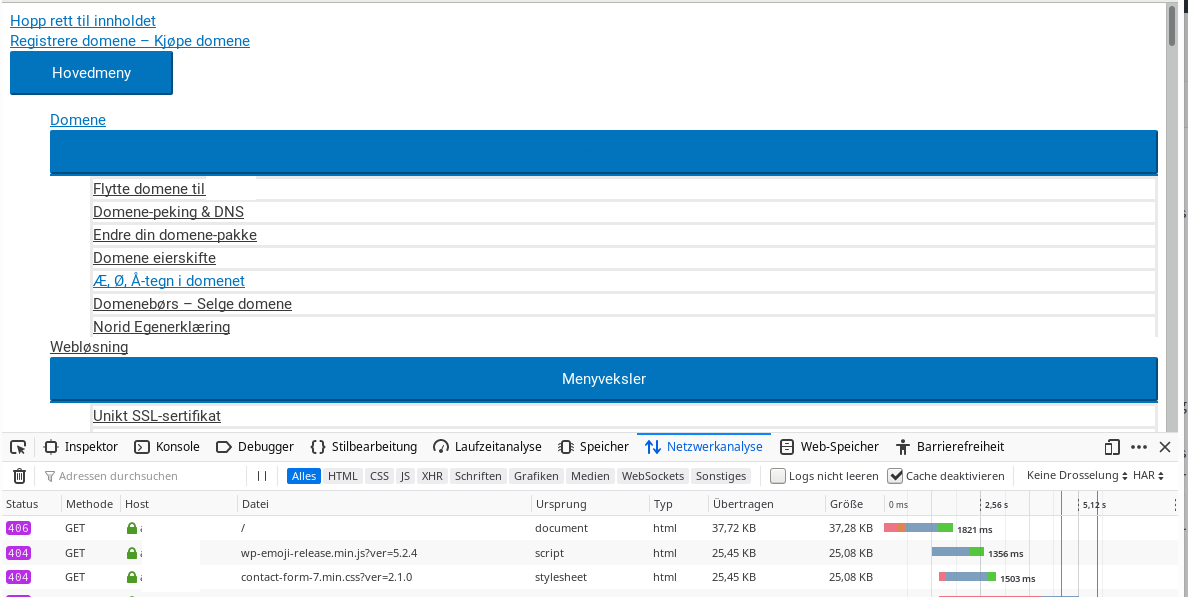

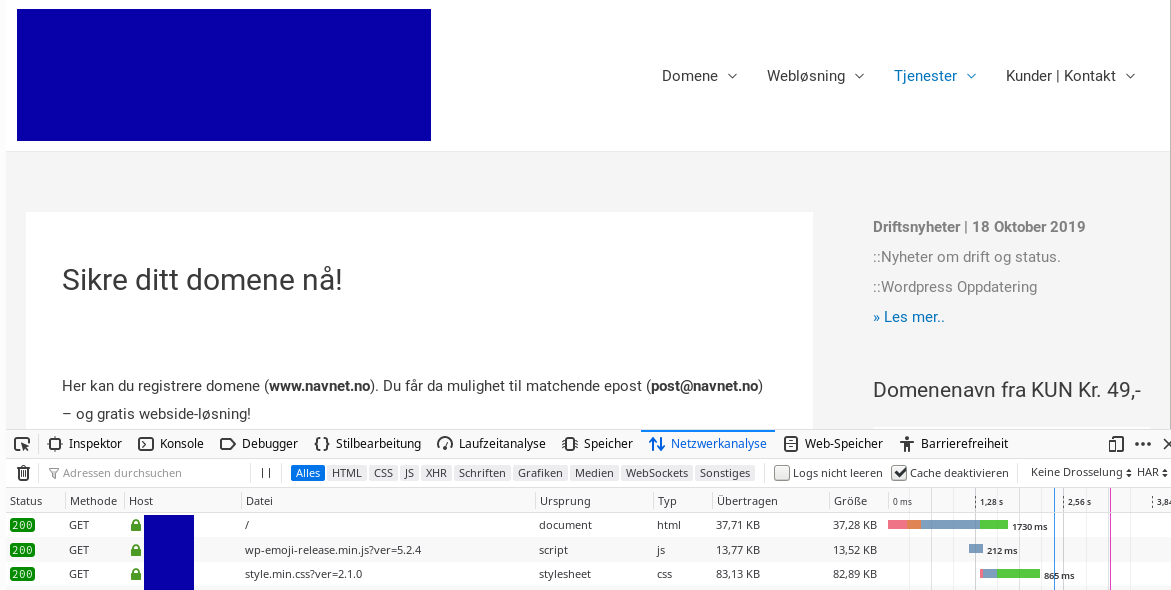

The same is true for other servers, e.g. the one hosting one of my own domains, “anracon.de”.

A simple trick shows what the correct request format is and that it works …

Let us use a simple trick with echo and a pipe on the Linux shell:

myself@mytux:~> (echo -ne "GET / HTTP/1.1\r\nHost: www.debian.org\r\n\r\n") | openssl s_client -tls1_2 -quiet -connect www.debian.org:443

This leads to

depth=2 O = Digital Signature Trust Co., CN = DST Root CA X3

verify return:1

depth=1 C = US, O = Let's Encrypt, CN = Let's Encrypt Authority X3

verify return:1

depth=0 CN = www.debian.org

verify return:1

HTTP/1.1 200 OK

Date: Mon, 28 Dec 2020 23:09:01 GMT

Server: Apache

Content-Location: index.en.html

Vary: negotiate,accept-language,Accept-Encoding,cookie

TCN: choice

X-Content-Type-Options: nosniff

X-Frame-Options: sameorigin

Referrer-Policy: no-referrer

X-Xss-Protection: 1

Strict-Transport-Security: max-age=15552000

Upgrade: h2,h2c

Connection: Upgrade

Last-Modified: Sun, 27 Dec 2020 19:27:21 GMT

ETag: "36b1-5b777257b5a41"

Accept-Ranges: bytes

Content-Length: 14001

Cache-Control: max-age=86400

Expires: Tue, 29 Dec 2020 23:09:01 GMT

X-Clacks-Overhead: GNU Terry Pratchett

Content-Type: text/html

Content-Language: en

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01//EN" "http://www.w3.org/TR/html4/strict.dtd">

<html lang="en">

<head>

<meta http-equiv="Content-Type" content="text/html; charset=utf-8">

<title>Debian -- The Universal Operating System </title>

...

...

</div> <!--/UdmComment-->

</div> <!-- end footer -->

</body>

</html>

After a timeout we get back our prompt.

So, we see that the server reacts properly to the end-of-line characters used, namely “\r\n” after “GET / HTTP/1.1” and after “Host: www.debian.org”, plus one more “\r\n” for the empty line (leading to 2 “\r\n” at the end of the request).
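To double-check which bytes actually leave the shell, the request can be dumped before it goes anywhere near the network. The following is only a small sketch: the function name build_request is made up for this illustration, and printf is used instead of “echo -ne” because the handling of echo options differs between shells.

```shell
# Print the request from the example above; printf expands \r and \n itself,
# independent of the shell's echo implementation.
# build_request is a hypothetical helper name, $1 is the host name.
build_request() {
    printf 'GET / HTTP/1.1\r\nHost: %s\r\n\r\n' "$1"
}

# Dump the bytes: every line end should show up as \r followed by \n.
build_request www.debian.org | od -c
```

In the od output each of the three line ends should appear as the pair “\r” and “\n”; a lone “\n” would point to the kind of request that the strict servers reject.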

But if we change this to

myself@mytux:~> (echo -ne "GET / HTTP/1.1\nHost: www.debian.org\r\n\r\n") | openssl s_client -tls1_2 -quiet -connect www.debian.org:443

we run into an “HTTP/1.1 400 Bad Request” answer again. (Note the “\n” before “Host: …”.)

Obviously, our “openssl s_client” interface works with LF-characters when pressing the “ENTER”-key on the command line. Well, the interface uses the typical Linux/Unix-style for EOLs …

By the way:

Some servers set very short timeouts for receiving all request lines, so you may have difficulties typing fast enough. Then the “trick” with an echo command and a pipe is very useful to test the server nonetheless.
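If you need the pipe trick more often, it may be convenient to wrap it into two small shell functions. This is a sketch under assumptions, not a finished tool: the names http_request and https_get are invented here, and the “Connection: close” header is an addition of mine which asks the server to close the connection after the response, so that you do not have to wait for a timeout to get your prompt back.

```shell
# http_request HOST [PATH]: print a minimal HTTP/1.1 GET request
# with CRLF line ends (hypothetical helper name).
http_request() {
    req_host=$1
    req_path=${2:-/}
    # "Connection: close" is an added header, not part of the original example.
    printf 'GET %s HTTP/1.1\r\nHost: %s\r\nConnection: close\r\n\r\n' \
        "$req_path" "$req_host"
}

# https_get HOST [PATH]: send the request through a TLS tunnel on port 443.
# Diagnostics that openssl writes to stderr are discarded.
https_get() {
    http_request "$1" "${2:-/}" |
        openssl s_client -quiet -connect "$1:443" 2>/dev/null
}

# Example call (needs network access, therefore commented out):
# https_get www.debian.org /
```

Keeping the request builder separate from the sending part makes it easy to inspect the request alone, e.g. with “http_request www.debian.org | od -c”.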

What is the reason for the unequal behavior of different servers?

The correct way to build HTTP-Requests is described e.g. in “www.tutorialspoint.com (http/http_requests.htm)“; I quote:

- A Request-line

- Zero or more header (General|Request|Entity) fields followed by CRLF

- An empty line (i.e., a line with nothing preceding the CRLF) indicating the end of the header fields

- Optionally a message-body

According to RFC7230 an HTTP request line has the following format:

request-line = method SP request-target SP HTTP-version CRLF

However, in section 3.5 the named RFC also says:

Although the line terminator for the start-line and header fields is the sequence CRLF, a recipient MAY recognize a single LF as a line terminator and ignore any preceding CR.

So, this explains the different behavior of some web-servers to the commands sent with “openssl s_client”.
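The request-line rule quoted above can be turned into a rough check on the shell. The sketch below is deliberately simplified and rests on assumptions: the function name is_valid_request_line is made up, the method is reduced to upper-case letters and the version to a “digit.digit” pattern, which is narrower than the full RFC grammar. What it does capture is the point that matters here, namely that the line must end with CR before the LF.

```shell
# is_valid_request_line: read a request on stdin and succeed only if the
# first line looks like "METHOD SP TARGET SP HTTP/x.y CR LF".
# Simplified pattern, hypothetical helper name.
is_valid_request_line() {
    cr=$(printf '\r')   # a literal carriage return for the pattern
    head -n 1 | grep -Eq "^[A-Z]+ [^ ]+ HTTP/[0-9]\.[0-9]${cr}\$"
}

# A CRLF-terminated line passes, a bare LF line does not.
printf 'GET / HTTP/1.1\r\n' | is_valid_request_line && echo "CRLF: accepted"
printf 'GET / HTTP/1.1\n'   | is_valid_request_line || echo "LF only: rejected"
```

A strict server behaves like this check, while a lenient one additionally accepts the second variant, as the RFC permits.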

What can we do with “openssl s_client” to enforce a CRLF as the end of lines when pressing the “ENTER”-key?

The “openssl s_client” command has a lot of options. You find an overview at the following URI:

https://zoomadmin.com/HowToLinux/LinuxCommand/s_client

The required option for our purpose is “-crlf“; we thus arrive at:

openssl s_client -quiet -crlf -tls1_2 -connect YOUR_TARGET_WEB_DOMAIN:443

as the right way to get strictly RFC-compliant web servers to answer manually typed HTTP requests within “openssl s_client” without error messages.
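The option “-crlf” translates a line feed coming from the terminal into CR+LF. The same translation can be done by hand in a pipe, which may be handy when a request is stored in a file with Unix line ends. The following is a sketch for illustration only; the function name lf_to_crlf and the file name request.txt are assumptions of mine, and the code does not claim to mirror how openssl implements the option internally.

```shell
# lf_to_crlf: copy stdin to stdout and end every line with CR LF.
# A CR that is already present is removed first, so lines are not doubled up.
# Hypothetical helper name.
lf_to_crlf() {
    awk '{ sub(/\r$/, ""); printf "%s\r\n", $0 }'
}

# Show the effect on a request typed with plain LF line ends.
printf 'GET / HTTP/1.1\nHost: www.debian.org\n\n' | lf_to_crlf | od -c

# Possible use with a request file (needs network access, therefore commented out):
# lf_to_crlf < request.txt | openssl s_client -quiet -connect www.debian.org:443
```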

Prepare your terminal for long answers from the server

Some web sites may present long web pages, so the HTTP/HTML code sent to you as an answer may be pretty long. As most Linux terminals limit the scrollable output length by default, you should look for settings that allow for long or infinite output within the terminal emulation of your choice. KDE’s “konsole”, for example, offers an option for scrolling output of unlimited length. A noteworthy side effect is, however, that the contents are saved unencrypted in temporary files (for which you can define a location on your PC). So, be a bit careful when using such options.
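When only the status line and the header fields are of interest, the output can also be cut at the first empty line instead of scrolling through the whole page. This is a minimal sketch; the function name headers_only is made up, and the canned response in the example merely stands in for real server output.

```shell
# headers_only: print an HTTP response from stdin up to (not including)
# the empty line that ends the header fields. Trailing CRs are stripped.
# Hypothetical helper name.
headers_only() {
    awk '{ sub(/\r$/, ""); if ($0 == "") exit; print }'
}

# Demonstration with a canned response instead of a real server.
printf 'HTTP/1.1 200 OK\r\nServer: Apache\r\n\r\n<html>...</html>\n' | headers_only

# Possible use in the pipe from above (needs network access, therefore commented out):
# printf 'GET / HTTP/1.1\r\nHost: www.debian.org\r\n\r\n' |
#     openssl s_client -quiet -connect www.debian.org:443 2>/dev/null | headers_only
```

If you want the full page anyway, redirecting the output into a file or piping it into a pager are alternatives to an unlimited scroll buffer; the caution about unencrypted copies on disk applies to a file as well.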

Conclusion

Using CRLF is the RFC-defined way to properly end HTTP request and header field lines. (… whether we from the Linux side may like this MS influenced definition or not …). To make “openssl s_client” send “CRLF” (instead of “LF”) when the “ENTER”-key is pressed, we should use the option “-crlf” when starting “s_client”. The additional options “-ign_eof” or “-quiet” are useful to prevent a shutdown of the connection before the server’s answer is fully displayed.

Links

https://zoomadmin.com/HowToLinux/LinuxCommand/s_client

https://stackoverflow.com/questions/5757290/http-header-line-break-style

https://tools.ietf.org/html/rfc7230#section-3.5

https://www.tutorialspoint.com/http/http_requests.htm

https://www.tutorialspoint.com/http/http_quick_guide.htm