My current Blender experiments on my old laptop take considerable time to render, especially animations. So I became interested in whether rendering on the laptop’s old Nvidia card, a GT 645M, would make a difference compared to rendering on the 8 hardware threads (4 hyperthreaded cores) of the CPU. The laptop’s CPU is an old one, too, namely an i7-3632QM. The laptop’s operating system is Opensuse Leap 15.3. The system uses Optimus technology. To switch between the Nvidia card and the Intel graphics I use Suse’s Prime Select application on KDE.

Rendering on the GPU turned out to be a factor of 2 up to 5.2 faster than on the CPU. The difference depends on multiple factors; the number of CPU threads used is an important one.

How to activate GPU rendering in Blender?

Basically three things are required: (1) A working recent Nvidia driver (with compute components) for your graphics card. (2) A certain setting in Blender’s preferences. (3) A setting for the Cycles renderer.

Regarding the CUDA toolkit, I quote from Blender’s documentation:

Normally users do not need to install the CUDA toolkit as Blender comes with precompiled kernels.

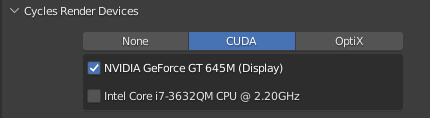

As for the required Blender settings, you have to choose a CUDA capable device via the menu point “Preferences >> System”:

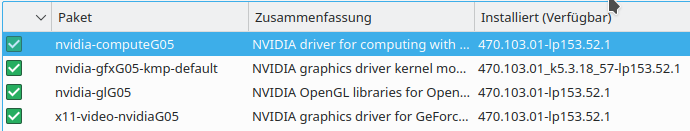

You may also select both the GPU and the CPU. Rendering will then be done on both devices at once. My graphics card unfortunately supports only a low CUDA compute capability. I used Nvidia driver version 470.103.01, installed via Opensuse’s Nvidia community repository:

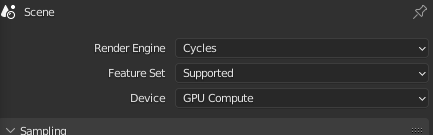

In addition, you must set the device of the Cycles renderer to “GPU Compute” in the render properties.
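The preference and the renderer setting can also be applied from Blender's Python console or a script. The following is a minimal sketch, assuming the `bpy` property names of Blender 3.x (`compute_device_type`, `get_devices()`, `devices`, `scene.cycles.device`); the helper name `enable_cuda` and its `use_cpu` parameter are hypothetical and not part of Blender.

```python
"""Sketch: enable CUDA rendering for Cycles. Meant to be run inside Blender."""


def enable_cuda(cycles_prefs, scene, use_cpu=False):
    """Tick the CUDA devices in the preferences and switch the scene to GPU.

    `enable_cuda` is a hypothetical helper. Returns the enabled device names.
    """
    cycles_prefs.compute_device_type = "CUDA"
    cycles_prefs.get_devices()  # refresh the list of available devices
    enabled = []
    for device in cycles_prefs.devices:
        # Enable every CUDA device; the CPU is only added on request.
        device.use = device.type == "CUDA" or (use_cpu and device.type == "CPU")
        if device.use:
            enabled.append(device.name)
    # Corresponds to "GPU Compute" in the render properties.
    scene.cycles.device = "GPU"
    return enabled


try:
    import bpy  # only importable inside Blender
except ImportError:
    bpy = None

if bpy is not None:
    prefs = bpy.context.preferences.addons["cycles"].preferences
    print(enable_cuda(prefs, bpy.context.scene))
```

Calling `enable_cuda(prefs, scene, use_cpu=True)` would correspond to ticking both the GPU and the CPU in the preferences.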

With all these settings, rendering on the GPU was a factor of 2 up to more than 5 faster than on a CPU with multiple cores.

The difference in performance, of course, depends on

- the number of threads used on the CPU, with 8 logical (hyperthreaded) cores available to the Linux OS

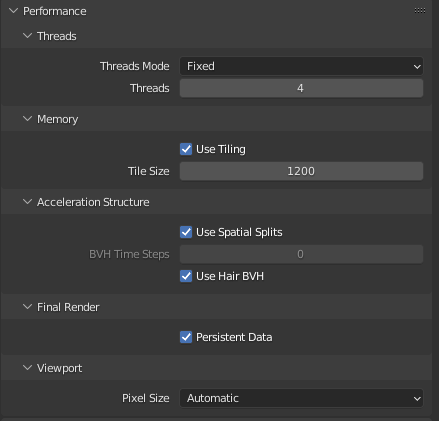

- tiling – more precisely the “tile size” – in case of the GPU and the CPU

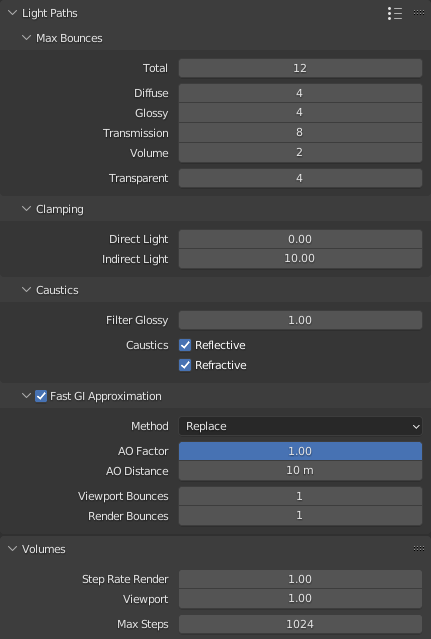

All other render options with the exception of “Fast GI” were kept constant during the experiments.

Scene Setup

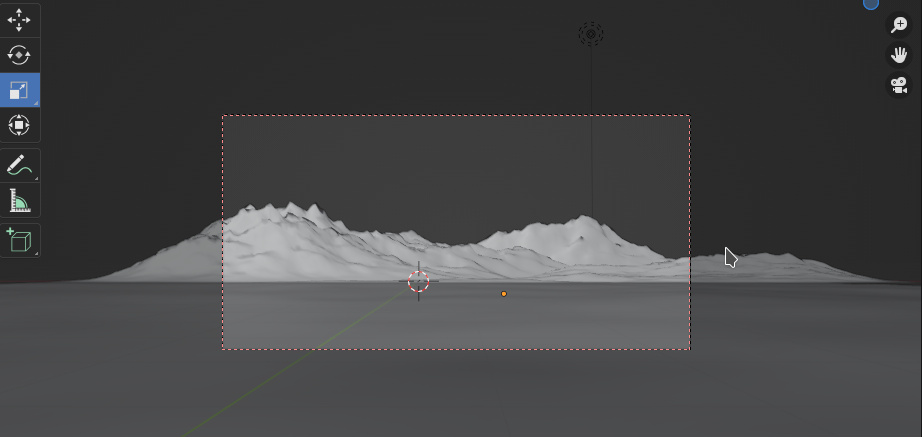

To give Blender’s Cycles renderer something to do I set up a scene with the following elements:

- a mountain-like landscape (via the A.N.T Landscape Add-On) with a subdivision of 256 to 128 – plus subdivision modifier (Catmull-Clark, render level 2, limit surface quality 3) – plus simple procedural texture with some noise and bumps

- a plane with an “ocean” modifier (no repetition, waves + noisy bump texture for the normal to simulate waves)

- a world with a sky texture of the Nishita type (a blue sky due to much oxygen, some dust and a sun just above the horizon)

The scene looked like

The central red rectangle marks the camera perspective and the area to be rendered. With 80 samples and a resolution of 1200×600 we get:

The hardest part for the renderer is the reflection on the water (Ocean with wave and texture). Also the “landscape” requires some time. The Nishita world (i.e. the sky with the sun), however, is rendered pretty fast.

Required time for rendering on multiple CPU cores

I used 40 samples to render – no denoising, progressive multi-jitter, 0 minimum bounces.

Other settings can be found here:

The number of threads, the tile size and the use of the Fast GI approximation were varied.

The resolution was chosen to be 1200×600 px.

All data below were measured on a flatpak installation of Blender 3.1.2 on Opensuse Leap 15.3.
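Such a series of measurements can also be scripted. The sketch below is only an illustration under assumptions, not the procedure used for the numbers in this post: it assumes the Blender 3.x property names `render.threads_mode`, `render.threads`, `cycles.samples`, `cycles.use_auto_tile`, `cycles.tile_size` and `cycles.use_fast_gi`, and the helpers `apply_settings` and `timed` are hypothetical. The wall-clock time measured this way includes scene synchronization and may differ from the render time Blender reports.

```python
"""Sketch: vary threads, tile size and Fast GI and time a Cycles render."""
import time


def apply_settings(scene, threads, tile_size, fast_gi, samples=40):
    """Apply the varied parameters to a scene (hypothetical helper)."""
    scene.render.resolution_x = 1200
    scene.render.resolution_y = 600
    scene.render.threads_mode = "FIXED"  # use a fixed number of CPU threads
    scene.render.threads = threads
    scene.cycles.samples = samples
    scene.cycles.use_auto_tile = True    # tiling must be on for tile_size to apply
    scene.cycles.tile_size = tile_size
    scene.cycles.use_fast_gi = fast_gi


def timed(render_fn):
    """Return the wall-clock seconds that render_fn() takes."""
    start = time.perf_counter()
    render_fn()
    return time.perf_counter() - start


try:
    import bpy  # only importable inside Blender
except ImportError:
    bpy = None

if bpy is not None:
    scene = bpy.context.scene
    for tile_size in (64, 128, 256):
        apply_settings(scene, threads=4, tile_size=tile_size, fast_gi=False)
        seconds = timed(lambda: bpy.ops.render.render(write_still=False))
        print(f"tile {tile_size}, 4 threads: {seconds:.2f} s")
```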

| tile size (px) | threads | Fast GI | time (s) |
| --- | --- | --- | --- |
| 64 | 2 | no | 82.24 |
| 128 | 2 | no | 81.13 |
| 256 | 2 | no | 81.01 |
| 32 | 4 | no | 45.63 |
| 64 | 4 | no | 43.73 |
| 128 | 4 | no | 43.47 |
| 256 | 4 | no | 43.21 |
| 512 | 4 | no | 44.06 |
| 128 | 8 | no | 31.25 |
| 256 | 8 | no | 31.04 |
| 256 | 8 | yes | 26.52 |
| 512 | 8 | no | 31.22 |

A tile size of 256×256 seems to provide an optimum regarding rendering performance. In my experience this depends heavily on the scene and the chosen image resolution.

“Fast GI” gives you a slight, but noticeable improvement. The differences in the rendered picture could only be seen in relatively tiny details of my special test case. It may be different for other scenes and illumination.
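The CPU measurements can also be read as a thread-scaling experiment. The short calculation below takes the three runs at tile size 256 without Fast GI and computes the speedup relative to the 2-thread run plus the efficiency against ideal scaling; the function name `scaling` is only illustrative.

```python
# CPU render times in seconds at tile size 256 with Fast GI off,
# taken from the CPU measurements (threads -> seconds).
cpu_times = {2: 81.01, 4: 43.21, 8: 31.04}


def scaling(times, baseline_threads=2):
    """Return {threads: (speedup, efficiency)} relative to the baseline run."""
    base_time = times[baseline_threads]
    result = {}
    for threads, seconds in sorted(times.items()):
        speedup = base_time / seconds
        # Ideal scaling would give a speedup equal to the thread-count ratio.
        efficiency = speedup / (threads / baseline_threads)
        result[threads] = (speedup, efficiency)
    return result


for threads, (speedup, efficiency) in scaling(cpu_times).items():
    print(f"{threads} threads: speedup {speedup:.2f}, efficiency {efficiency:.0%}")
```

Going from 2 to 4 threads nearly halves the render time (speedup about 1.87), while 8 threads reach a speedup of only about 2.61. One plausible reading is that the i7-3632QM has 4 physical cores, so the additional 4 hyperthreads contribute less than full cores would.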

Note: With all 8 CPU threads active my laptop was stressed regarding CPU temperature: It went up to 81° Celsius.

Required time for rendering on the mobile GPU

Below are the render times on the mobile Nvidia GPU GT 645M:

| tile size (px) | Fast GI | time (s) |
| --- | --- | --- |
| 64 | no | 18.3 |
| 128 | no | 16.47 |
| 256 | no | 15.56 |
| 512 | no | 15.41 |
| 1024 | no | 15.39 |
| 1200 | no | 15.21 |
| 1200 | yes | 12.80 |

Bigger tile sizes improve the GPU rendering performance! This may be different for rendering on a CPU, especially for small scenes. There you have to find an optimum for the tile size. Again, we see an effect of Fast GI.

Note: The temperature of the mobile graphics card never rose above 58° Celsius. I measured this whilst rendering a much bigger image of 4800×2400 px. I therefore think that the temperature stress Blender rendering exerts on the GPU is lower than the heat stress on the CPU.

Required time for rendering both on the CUDA capable mobile GPU and the CPU

In the “Preferences” settings the CPU can be ticked in addition to the GPU in the list of CUDA devices. Cycles then renders on both devices at once. With 4 CPU threads this brings you down to around 11 secs, with 8 threads down to around 10 secs.

| tile size (px) | threads | Fast GI | time (s) |
| --- | --- | --- | --- |
| 64 | 4 | no | 11.01 |
| 128 | 8 | no | 10.08 |

Conclusion

Even on an old laptop with Optimus technology it is worthwhile to use a CUDA capable Nvidia graphics card for Cycles based rendering in Blender experiments. The rise in temperature was relatively low in my case. The gain in performance may range from a factor of 2 to 5, depending on how many CPU threads you can use without overheating your laptop.
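The quoted range can be checked against the measurements. The sketch below divides the fastest CPU time per thread count (tile size 256, Fast GI off) by the fastest GPU time without Fast GI (tile size 1200), and does the same for the two Fast GI runs; the function name `gpu_speedup` is only illustrative.

```python
# Fastest CPU times in seconds per thread count (tile size 256, Fast GI off).
cpu_best = {2: 81.01, 4: 43.21, 8: 31.04}
# Fastest GPU time in seconds without Fast GI (tile size 1200).
gpu_best = 15.21


def gpu_speedup(cpu_seconds, gpu_seconds):
    """How many times faster the GPU run was than the CPU run."""
    return cpu_seconds / gpu_seconds


speedups = {threads: gpu_speedup(sec, gpu_best) for threads, sec in cpu_best.items()}
# Both runs with Fast GI enabled: 8 CPU threads versus the GPU.
fast_gi_speedup = gpu_speedup(26.52, 12.80)

for threads, factor in speedups.items():
    print(f"GPU vs. {threads} CPU threads: {factor:.2f}x")
print(f"GPU vs. 8 CPU threads, both with Fast GI: {fast_gi_speedup:.2f}x")
```

This gives roughly 5.3x against 2 threads, 2.8x against 4 threads and 2.0x against 8 threads, so the fewer CPU threads you can afford to run, the more the GPU pays off.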

Ceterum censeo: The worst living fascist and war criminal today, who must be isolated, denazified and imprisoned, is the Putler.