In my last post I set up a Pandas dataframe with a column containing a (German) wordlist of around 2.2 million words. We created a unique string based index for the dataframe from a column wither “lower case” writing of the words. My eventual objectives are

- to find out whether a string like token out of some millions of tokens is a member of the wordlist or not,

- to compare n-grams of characters, i.e. (sub-strings) of millions of given strange string tokens with the n-grams of each word in the wordlist.

In the first case a kind of “existence-query” on the wordlist is of major importance. We could work with a condition on a row-value or somehow use the string based index itself. For the second objective we need a requests on column values with “OR” conditions or again a kind of existence-queries on individual columns, which we turn into index structures before.

It found it interesting and a bit frustrating that a lot of introductory articles on the Internet and even books do not comment on performance. In this article we, therefore, compare the performance of different forms of simple data requests on a Pandas dataframe. To learn a bit more about Pandas’ response times, we extend the data retrieval requests a bit beyond the objectives listed above: We are going to look for rows where conditions for multiple words are fulfilled.

For the time being we restrict our experiments to a dataframe with just one UNIQUE index. I.e. we do not, yet, work with a multi-index. However, at the end of this article, I am going to look a bit at a dataframe with a NON-UNIQUE index, too.

Characteristics of the dataframe and the “query”

We work on a Pandas dataframe “dfw_smallx” with the following characteristics:

pdx_shape = dfw_smallx.shape

print("shape of dfw_smallx = ", pdx_shape)

pdx_rows = pdx_shape[0]

pdx_cols = pdx_shape[1]

print("rows of dfw_smallx = ", pdx_rows)

print("cols of dfw_smallx = ", pdx_cols)

print("column names", dfw_smallx.columns)

print("index", dfw_smallx.index)

print("index is unique: ", dfw_smallx.index.is_unique)

print('')

print(dfw_smallx.loc[['aachener']])

shape of dfw_smallx = (2188246, 3)

rows of dfw_smallx = 2188246

cols of dfw_smallx = 3

column names Index(['lower', 'word', 'len'], dtype='object')

index Index(['aachener', 'aachenerin', 'aachenerinnen', 'aachenern', 'aacheners',

'aachens', 'aal', 'aale', 'aalen', 'aales',

...

'zynisches', 'zynischste', 'zynischsten', 'zynismus', 'zypern',

'zyperns', 'zypresse', 'zypressen', 'zyste', 'zysten'],

dtype='object', name='indw', length=2188246)

index is unique: True

lower word len

indw

aachener aachener AACHENER 8

The only difference to the dataframe created in the last article is the additional column “lower”, repeating the index. As said, the string based index of this dataframe is unique (checked by dfw_smallx.index.is_unique). At the end of this post we shall also have a look at a similar dataframe with a non-unique index.

Query: For a comparison we look at different methods to answer the following question: Are there entries for the words “null”, “mann” and “frau” in the list?

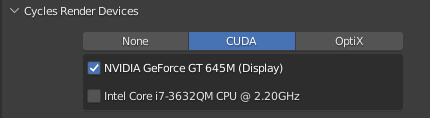

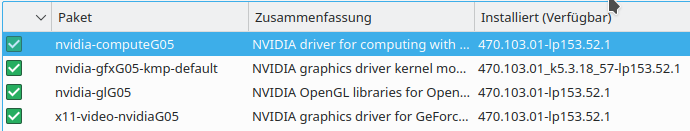

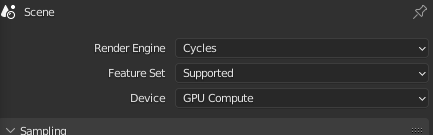

We apply each methods a hundred times to get some statistics. I did the experiments on a CPU (i7-6700K). The response time depends a bit on the background load – I took the best result out of three runs.

Unique index: CPU Time for checking the existence of an entry within the index itself

There is a very simple answer to the question, of how one can check the existence of a value in a (string based) index of a Pandas dataframe. We just use

r

“(‘STRING-VALUE‘ in df.index)” !

Let us apply this for three values

b1 = 0; b2=0; b3=0;

v_start_time = time.perf_counter()

for i in range(0, 100):

if 'null' in dfw_smallx.index:

b1 = 1

if 'mann' in dfw_smallx.index:

b2=1

if 'frau' in dfw_smallx.index:

b3=1

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

b4 = 'gamling' in dfw_smallx.index

print(b1, b2, b3, b4)

Total CPU time 0.00020675300038419664

NULL

1 1 1 False

Giving me a total time on my old PC of about 2.1e-4 secs. Which – as we are going to see is a pretty good value – for Pandas!

Total time 2.1e-4 secs. Per query: 6.9e-7 secs.

Unique index: CPU Time for checking the existence of an entry with a Python dictionary

It is interesting to compare the query time required for a simple dictionary.

We first create a usable dictionary with the lower case word strings as the index:

ay_voc = dfw_smallx.to_numpy()

print(ay_voc.shape)

print(ay_voc[0:2, :])

ay_lower = ay_voc[:,0].copy()

d_lower = dict(enumerate(ay_lower))

d_low = {y:x for x,y in d_lower.items()}

print(d_lower[0])

print(d_low['aachener'])

(2188246, 3)

[['aachener' 'AACHENER' 8]

['aachenerin' 'AACHENERIN' 10]]

aachener

0

And then:

b1 = 0; b2 = 0; b3 = 0

v_start_time = time.perf_counter()

for i in range(0, 100):

if 'null' in d_low:

b1 = 1

if 'mann' in d_low:

b2=1

if 'frau' in d_low:

b3=1

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

print(b1, b2, b3)

print(d_low['mann'], d_low['frau'])

Total CPU time 8.626400085631758e-05

1 1 1

1179968 612385

Total time 8.6e-5 secs. Per query: 2.9e-7 secs.

A dictionary is by almost a factor of 2.4 faster regarding a verification of the existence of a given string value in a string based index than related queries on an indexed Pandas series or dataframes!

Unique index: CPU Time for direct “at”-queries by providing individual index values

Now, let us start with repeated query calls on a our dataframe with the “at”-operator:

v_start_time = time.perf_counter()

for i in range(0, 100):

wordx = dfw_smallx.at['null', 'word']

wordy = dfw_smallx.at['mann', 'word']

wordz = dfw_smallx.at['frau', 'word']

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

Total CPU time 0.0013257559985504486

Total time: 1.3e-3 secs. Per query: 4.4e-6 secs

This approach is by factors 6.5 and 15 slower than the fastest solutions for Pandas and the dictionary, respectively

Unique index: CPU Time for direct “loc”-queries by providing individual index values

We now compare this with the same queries – but with the “loc”-operator:

v_start_time = time.perf_counter()

for i in range(0, 100):

wordx = dfw_smallx.loc['null', 'word']

wordy = dfw_smallx.loc['mann', 'word']

wordz = dfw_smallx.loc['frau', 'word']

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

Total CPU time 0.0021894429992244113

NULL

Total time: 2.2e-3 secs. &

nbsp; Per query: 7.3e-6 secs

More than a factor of 10.6 and 25.4 slower than the fastest Pandas solution and the dictionary, respectively.

Unique index: CPU Time for a query based on a list of index values

Now, let us use a list of index values – something which would be typical for a programmer who wants to save some typing time:

# !!!! Much longer CPU time for a list of index values

inf = ['null', 'mann', 'frau']

v_start_time = time.perf_counter()

for i in range(0, 100):

wordx = dfw_smallx.loc[inf, 'word'] # a Pandas series

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

print(wordx)

Total CPU time 0.037733839999418706

indw

null NULL

mann MANN

frau FRAU

Name: word, dtype: object

Total time: 3.8e-2 secs. Per query: 1.3e-4 secs

More than a factor of 182 and 437 slower than the fastest Pandas solution and the dictionary, respectively.

Unique index: CPU Time for a query based on a list of index values with index.isin()

We now try a small variation by

ix = dfw_smallx.index.isin(['null', 'mann', 'frau'])

v_start_time = time.perf_counter()

for i in range(0, 100):

wordx = dfw_smallx.loc[ix, 'word']

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

Total CPU time 0.058356915000331355

Total time: 5.8e-2 secs. Per query: 1.9e-4 secs

We are loosing ground, again.

More than a factor of 282 and 667 slower than the fastest Pandas solution and the dictionary, respectively.

Unique index: CPU Time for a query based on a list of index values with index.isin() within loc()

Yet, another seemingly small variation

inf = ['null', 'mann', 'frau']

v_start_time = time.perf_counter()

for i in range(0, 100):

wordx = dfw_smallx.loc[dfw_smallx.index.isin(inf), 'word']

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

Total CPU time 6.466159620998951

OOOOPs!

Total time: 6.48 secs. Per query: 2.2e-2 secs

More than a factor of 31000 and 75000 slower than the fastest Pandas solution and the dictionary, respectively.

Unique index: Query by values for a column and ignoring the index

What happens if we ignore the index and query by values of a column?

v_start_time = time.perf_counter()

for i in range(0, 100):

pdq = dfw_smallx.query('indw == "null" | indw == "mann" | indw == "frau" ')

#print(pdq)

w1 = pdq.iloc[0,0]

w2 = pdq.iloc[1,0]

w3 = pdq.iloc[2,0]

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

Total CPU time 16.747838952000166

Well, well – the worst result so far!

Total time: 16.75 secs. Per query: 5.6e-2 secs

More than a factor of 81000 and 194000 slower than the fastest Pandas solution and the dictionary, respectively.

The following takes even longer:

v_start_time = time.perf_counter()

for i in range(0, 100):

word3 = dfw_smallx.loc[dfw_smallx['word'] == 'NULL', 'word']

word4 = dfw_smallx.loc[dfw_smallx['word'] == 'MANN', 'word']

word5 = dfw_smallx.loc[dfw_smallx['word'] == 'FRAU', 'word']

w4 = word3.iloc[0]

w5 = word4.iloc[0]

w6 = word4.iloc[0]

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

r

Total CPU time 22.6538158809999

Total time: 22.76 secs. Per query: 7.6e-2 secs

More than a factor of 109000 and 262000 slower than the fastest Pandas solution and the dictionary, respectively.

However:

v_start_time = time.perf_counter()

for i in range(0, 100):

pdl = dfw_smallx.loc[dfw_smallx['word'].isin(['MANN', 'FRAU', 'NULL' ]), 'word']

w6 = pdl.iloc[0]

w7 = pdl.iloc[1]

w8 = pdl.iloc[2]

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

This gives us 6.576 secs again.

Unique index: Query by values of a dictionary and ignoring the index

Here a comparison to a dictionary is interesting again:

v_start_time = time.perf_counter()

b1 = 0; b2 = 0; b3 = 0

for i in range(0, 100):

if 'null' in d_lower.values():

b1 = 1

if 'mann' in d_lower.values():

b2=1

if 'frau' in d_lower.values():

b3=1

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

print(b1, b2, b3)

Total CPU time 4.572028649003187

1 1 1

So a dictionary is faster than Pandas – even if we ignore the index and query for certain values!

Intermediate conclusions

What do the above results tell us about the handling of Pandas dataframes or series with strings as elements and an unique index containing strings, too?

The first thing is:

If possible do not use a Pandas dataframe at all! Turn (two) required (string) columns of the dataframe into a string indexed dictionary!

In our case a simple solution was to turn a column with lower case writings of the words into a dictionary index and using an enumerated column as values.

A dictionary with string based keys will give you by far the fastest solution if you are only interested in the existence of a certain key-value.

Now, if you want to use Pandas to check the existence of a certain string in a unique index or column the following rules should be followed:

- Whenever possible use a (unique) index based on the strings whose existence you are interested in.

- For pure existence checks of a string in the index use a query of the type

“if ‘STRING‘ in df.index”.

This will give you the fastest solution with Pandas.

- Whenever possible use a series of simple queries – each for exactly one index value and one or multiple column labels instead of providing multiple index values in a list.

- If you want to use the “at” or “loc”-operators, prefer the “at”-operator for a unique index! The form should be

result = df.at[‘IndexValue’, ‘ColLabel’].

The loc-operator

result = df.loc[‘IndexValue’, ‘colLabel1’, ‘colLabel2’, …] is somewhat slower, but the right choice if you want to retrieve multiple columns for a single row index value.

- Avoid results which themselves become Pandas dataframes or series – i.e. results which contain a multitude of rows and column values

- Avoid queries on column-values! The CPU times to produce results depending on conditions for a column may vary; factors between 6 and 200,000 in comparison to the fastest solution for single values are possible.

Using a string based index in a Pandas dataframe is pretty fast because Pandas then uses a hash-function and a hashtable to index datarows. Very much like Python handles dictionaries.

Dataframes with a non-unique string index

Let us now quickly check what happens if we turn to a vocabulary with a non-unique

string based index. We can easily get this from the standard checked German wordlist provided by T.Brischalle.

dfw_fullx = pd.read_csv('/py/projects/CA22/catch22/Wortlisten/word_list_german_spell_checked.txt', dtype='str', na_filter=False)

dfw_fullx.columns = ['word']

dfw_fullx['indw'] = dfw_fullx['word']

pdfx_shape = dfw_fullx.shape

print('')

print("shape of dfw_fullx = ", pdfx_shape)

pdfx_rows = pdfx_shape[0]

pdfx_cols = pdfx_shape[1]

print("rows of dfw_fullx = ", pdfx_rows)

print("cols of dfw_fullx = ", pdfx_cols)

giving:

shape of dfw_fullx = (2243546, 2)

rows of dfw_fullx = 2243546

cols of dfw_fullx = 2

We set an index as before by lowercase word values:

dfw_fullx['indw'] = dfw_fullx['word'].str.lower()

dfw_fullx = dfw_fullx.set_index('indw')

word_null = dfw_fullx.loc['null', 'word']

word_mann = dfw_fullx.loc['mann', 'word']

word_frau = dfw_fullx.loc['frau', 'word']

print('')

print(word_null)

print('')

print(word_mann)

print('')

print(word_frau)

Giving:

indw

null null

null Null

null NULL

Name: word, dtype: object

indw

mann Mann

mann MANN

Name: word, dtype: object

indw

frau Frau

frau FRAU

Name: word, dtype: object

You see directly that the index is not unique.

Non-unique index: CPU Time for direct queries by with single index values

We repeat our first experiment from above :

v_start_time = time.perf_counter()

for i in range(0, 100):

pds1 = dfw_fullx.loc['null', 'word']

pds2 = dfw_fullx.loc['mann', 'word']

pds3 = dfw_fullx.loc['frau', 'word']

v_end_time = time.perf_counter()

print("Total CPU time ", v_end_time - v_start_time)

print(pds1)

This results in:

indw

null null

null Null

null NULL

Name: word, dtype: object

TotalCPU time 7.232291821999752

7.2 secs! Not funny!

The reason is that the whole index must be checked for entries of the given value.

Why the whole dataset? Well, Pandas cannot be sure that the string based index is sorted in a way!

Non-unique index: CPU Time for direct queries by single index values and a sorted index

We remedy the above problem by sorting the index:

dfw_fullx = dfw_fullx.sort_index(axis=0)

And afterwards we try our test from above again – this time giving us :

indw

null Null

null null

null NULL

Name: word, dtype: object

Total CPU time 0.04120599599991692

0.041 secs – much faster. On average the response time now depends on log(N) because Pandas now can use a binary search – with N being the number of elements in the index.

Conclusions

For me as a beginner with Pandas the multitude of options to retrieve values from a Pandas series or dataframe was confusing and their relation to index involvement and the construction of the “result set” was not always obvious. Even more surprising, however, was the impact on performance:

As soon as you hit multiple rows by a “query” a Pandas series or dataframe is constructed which contains the results. This may have advantages regarding the presentation of the result data and advantages regarding a convenient post-query handling of the results. It is however a costly procedure in terms of CPU time. In database language:

For Pandas building the result set can become more costly than the query itself – even for short result sets. This was in a way a bit disappointing.

If you are interested in just the existence of a certain string values in a list of unique strings just create a

dictionary – indexed by your string values. Then check the existence of a string value by “(‘STRING-VALUE‘ in dict)”.

You do not need Pandas or this task!

When you want to use an (indexed) Pandas for existence checks then query like “if ‘STRING-VALUE‘ in df.index” to check the existence of a string value in the properly created string based index.

When you need a bunch of values in various columns for given indices go for the “at”-operator and individual queries for each index value – and not a list.

When retrieving values you should, in general, use a direct form of “selecting” by just one index value and (if possible) one column value with the “at”-operator (df.at[‘indexValue’, ‘columnLabel’]). The loc-operator is up to a factor 1,7 slower – but relevant if you query for more than one columns.

Non-unique indices cost time, too. If you have no chance to associate your dataframe with unique index that suits your objectives then sort the non-unique index at least.